Content Moderation on News and Social Media

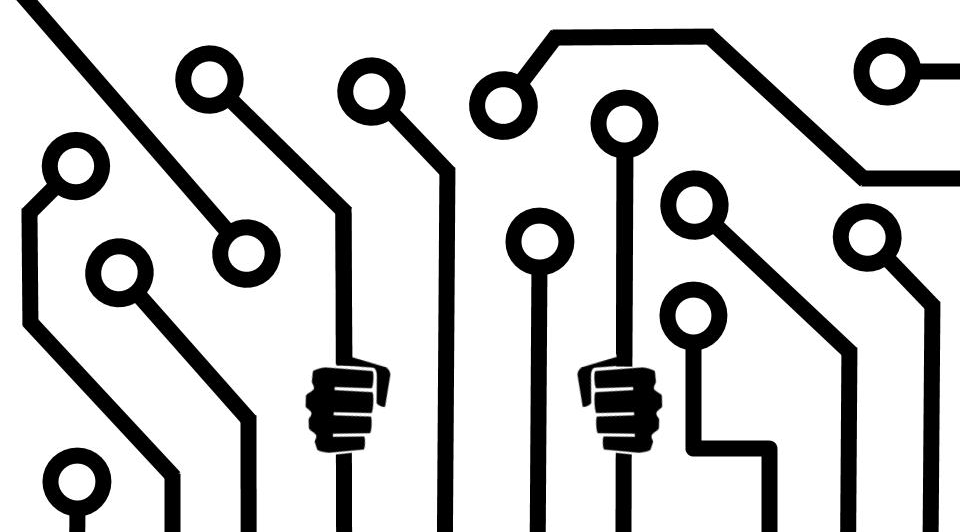

AI tools are used to spot potentially harmful comments, posts and other content and remove them from discussion boards and social media platforms. These tools can misconstrue language that reflects a different cultural context, effectively censoring people’s voices.