Twitter image cropping

Another reminder that bias testing and diversity are needed in machine learning: Twitter’s image-crop AI may favour white men, women’s chests. Strange, it didn’t show up during development, says social network. Digital imagery favours white people in framing and de-emphasises the visibility of non-white people.


Google Cloud’s image tagging AI

Google Cloud’s AI recog code ‘biased’ against black people – and more from ML land. Including: Yes, that nightmare smart toilet that photographs you mid… er, process. Digital imagery tagging provides negative context for non-white people.


Image processing

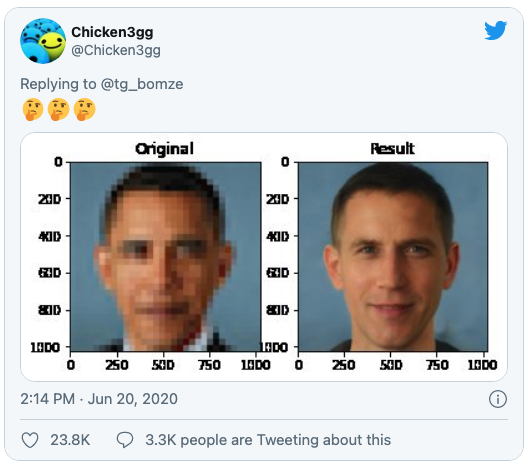

Once again, racial biases show up in AI image databases, this time turning Barack Obama white. Researchers used a pre-trained off-the-shelf model from Nvidia. Digital imagery tagging provides negative context for non-white people.
