AI Making Legal Judgements

Algorithms are increasingly becoming the arbiters of decisions about individuals (e.g. eligibility for government benefits, granting licences, pre-sentencing and sentencing, and granting parole). Whilst AI tools may be used to mitigate human biases and to make trials faster and cheaper, there is evidence that they may instead reinforce biases by using characteristics such as postcode or socio-economic status as a proxy for ethnicity.

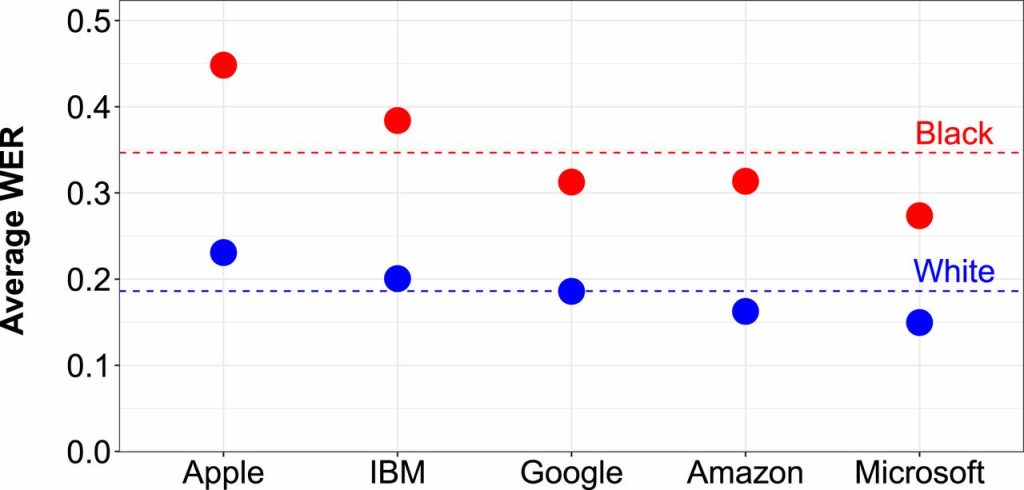

Commercial AI tools such as speech recognition systems, which have been shown to be less reliable for non-white speakers, can actively harm some groups when criminal justice agencies use them to transcribe courtroom proceedings.