Digital Media Manipulation and Processing

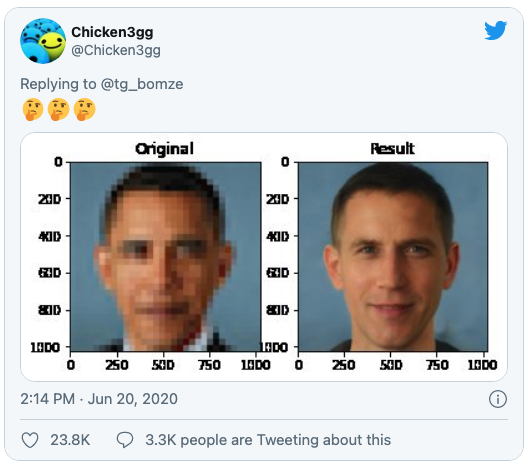

When AI is used to alter an image or video file, for example by cropping it automatically, any bias built into the system can change that image in an unfair way. An automated cropping tool may favour lighter-skinned people and reduce the visibility of darker-skinned people, for instance by leaving them out of the frame. People tend to trust photos and video as a faithful record of events ("seeing is believing"), so a biased crop gives a false impression of reality through a medium that appears to show reality directly.
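The source does not describe any particular cropping system. The sketch below is a hypothetical illustration. It assumes the cropper scores every pixel with a "saliency" model (a score for how visually important the pixel is) and keeps the fixed-width window with the highest total score. The helper names and saliency values are invented. They show that if the model systematically scores one person lower, the crop can cut that person out even though the cropping rule itself never mentions them.

```python
from typing import List


def best_crop_offset(saliency: List[List[float]], crop_width: int) -> int:
    """Return the left column of the crop window with the highest total saliency.

    Hypothetical 'saliency-maximising' cropper: it keeps the full image height
    and chooses only where to place a window of `crop_width` columns.
    """
    height = len(saliency)
    width = len(saliency[0])
    # Sum saliency for each column once, then slide the window across columns.
    column_totals = [sum(saliency[r][c] for r in range(height)) for c in range(width)]
    best_offset, best_score = 0, float("-inf")
    for offset in range(width - crop_width + 1):
        score = sum(column_totals[offset:offset + crop_width])
        if score > best_score:  # strict '>' means ties keep the leftmost window
            best_offset, best_score = offset, score
    return best_offset


def make_saliency(score_a: float, score_b: float) -> List[List[float]]:
    """Build an invented 2 x 6 saliency map: person A in columns 0-1,
    empty background in columns 2-3, person B in columns 4-5."""
    row = [score_a, score_a, 0.0, 0.0, score_b, score_b]
    return [row[:] for _ in range(2)]


# Hypothetical biased model: it scores person B lower than person A.
biased = make_saliency(score_a=0.9, score_b=0.6)
print(best_crop_offset(biased, crop_width=3))  # 0: the window keeps A and drops B
```

Swapping the two scores (`make_saliency(0.6, 0.9)`) moves the window to offset 3, so which person stays in frame depends only on the scores the model assigns to each person.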

Tagging media files with descriptive keywords and search terms also affects how they appear in search results, and can mislead if the tags used to retrieve an image are unfavourable or prejudiced. All of the above are forms of media file manipulation or processing.
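A minimal, hypothetical sketch of tag-based retrieval shows why the tags matter so much. It assumes a simple index that maps each image ID to a set of tags, and a search that returns every image whose tags contain all the query words. The search never looks at the image content, only at the tags. The index, image IDs and the stereotyped tag are invented for illustration.

```python
from typing import Dict, List, Set


def search(index: Dict[str, Set[str]], query: str) -> List[str]:
    """Return the IDs of images whose tag set contains every query word, sorted by ID."""
    terms = set(query.lower().split())
    # Only the tags are checked; the pictures themselves are never inspected.
    return sorted(image_id for image_id, tags in index.items() if terms <= tags)


# Hypothetical index: both photos show a doctor at work, but photo_2 was
# tagged "nurse" because of a stereotyped assumption by whoever labelled it.
index = {
    "photo_1": {"doctor", "hospital", "stethoscope"},
    "photo_2": {"nurse", "hospital", "stethoscope"},
}

print(search(index, "doctor"))  # ['photo_1']
print(search(index, "nurse"))   # ['photo_2']
```

Because retrieval follows the tags, the second doctor is invisible to anyone searching for "doctor" and is presented as something else to anyone searching for "nurse".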