Blog

With so much buzz about AI at present, we invite blog post contributions which demystify key topics.

And look out for our news updates!

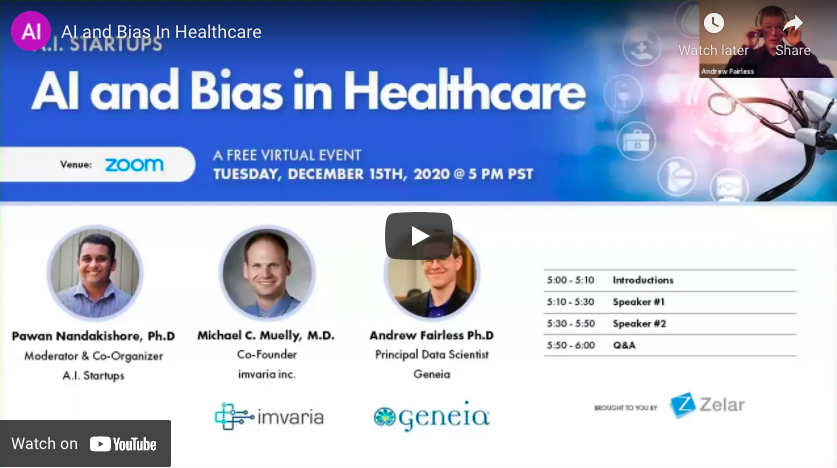

This guest panel series examines the use of AI in assisting healthcare, with a particular focus on automating tasks, communicating diagnoses and allocating resources. It examines the sources of bias in AI-integrated systems and what we can do to eliminate that bias.


Abstract. The COVID-19 pandemic is having a disproportionate impact on minorities in terms of infection rate, hospitalizations, and mortality. Many believe that artificial intelligence could be a solution to guide clinical decision making to overcome this novel disease. […]


The complexity and rise of data in healthcare means that artificial intelligence (AI) will increasingly be applied within the field. Several types of AI are already being employed by payers and providers of care, and life sciences companies. The key categories … This report discusses current applications of AI as well as potential future applications […]


Artificial intelligence (AI) is providing opportunities to transform cardiovascular medicine. As the leading cause of morbidity and mortality worldwide, cardiovascular disease is prevalent across all populations, with clear benefit to operationalise clinical and biomedical data to improve workflow… This medical paper examines the potential of artificial intelligence in cardiovascular medicine; it could hugely benefit patient […]


Medical devices employing AI stand to benefit everyone in society and to reduce general biases in the health care system, but if left unchecked, the technologies could unintentionally perpetuate sex, gender, and race biases. The AI […]


Sweeping calculation suggests it could be — but how to fix the problem is unclear. An estimated one million black adults would be transferred earlier for kidney disease if US health systems removed a ‘race-based correction factor’ from an algorithm they use to diagnose people and decide whether to administer medication. There is a debate […]


Artificial intelligence in healthcare currently reflects the same racial and gender biases as the culture at large. Those prejudices are built into the data. AI technologies are being used to diagnose Alzheimer’s disease by assessing speech. This technology could aid early diagnosis of Alzheimer’s. However, it’s evident that the algorithms behind this technology are trained […]


Heralded as an easy fix for health services under pressure, data technology is marching ahead unchecked. But is there a risk it could compound inequalities? Journalist Poppy Noor investigates how black people with melanoma are being underserved in healthcare, and the link to the racist algorithms driving new cancer software. Most of […]


Pulse oximeters give biased results for people with darker skin. The consequences could be serious. COVID-19 care has brought the pulse oximeter into the home; it is a medical device that measures your oxygen saturation levels. This article examines research that shows oximetry’s racial bias. Oximeters have been calibrated, tested and developed using light-skinned […]


Health monitoring devices influence the way we eat, sleep, exercise, and perform our daily routines. But what do we do when we discover that the technology we rely on is built on faulty methodology and… […]


The advent of AI promises to revolutionise the way we think about medicine and healthcare, but who do we hold accountable when automated procedures go awry? In this talk, Varoon focuses on the lack of affordable medicines within healthcare and the concerns over racial bias being brought into the healthcare system.


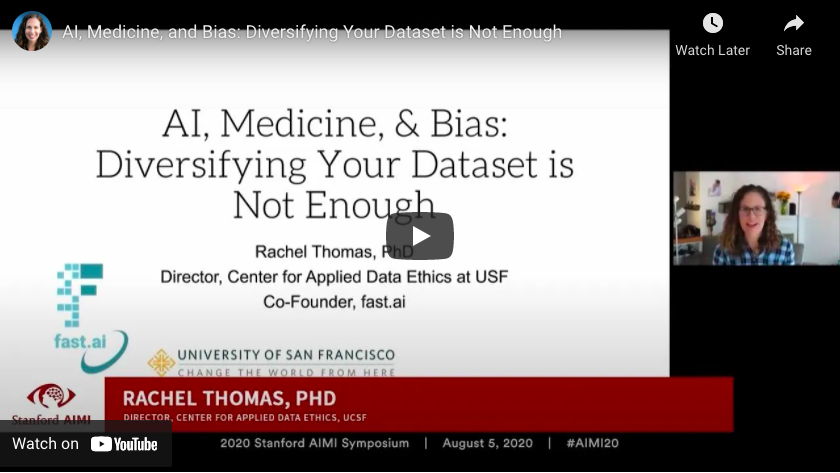

Using machine learning in medicine as an example, Rachel Thomas examines racial bias within the AI technologies driving modern-day medicines and treatments. She argues that while the diversity of your data set and the performance of your model across different demographic groups are important, this is only a narrow slice […]
