To increase public power in AI decision making, we must increase critical AI literacy

We and AI is a nonprofit volunteer social justice organisation supporting greater public input into whether and how AI is used.

Our main areas of work are advocacy for critical AI literacy; the design, development and delivery of literacy content; and guidance on developing responsible and inclusive discourse about AI.

Better Images of AI: Free library of realistic images of AI


Stock images strongly influence the way people think about the topics they illustrate. Research has repeatedly shown that many images of artificial intelligence are misleading and unhelpful. Coordinated by We and AI, Better Images of AI is a non-profit collaboration which runs a free library of more inclusive and transparent images that anyone can use, and a community blog exploring visual narratives of AI.

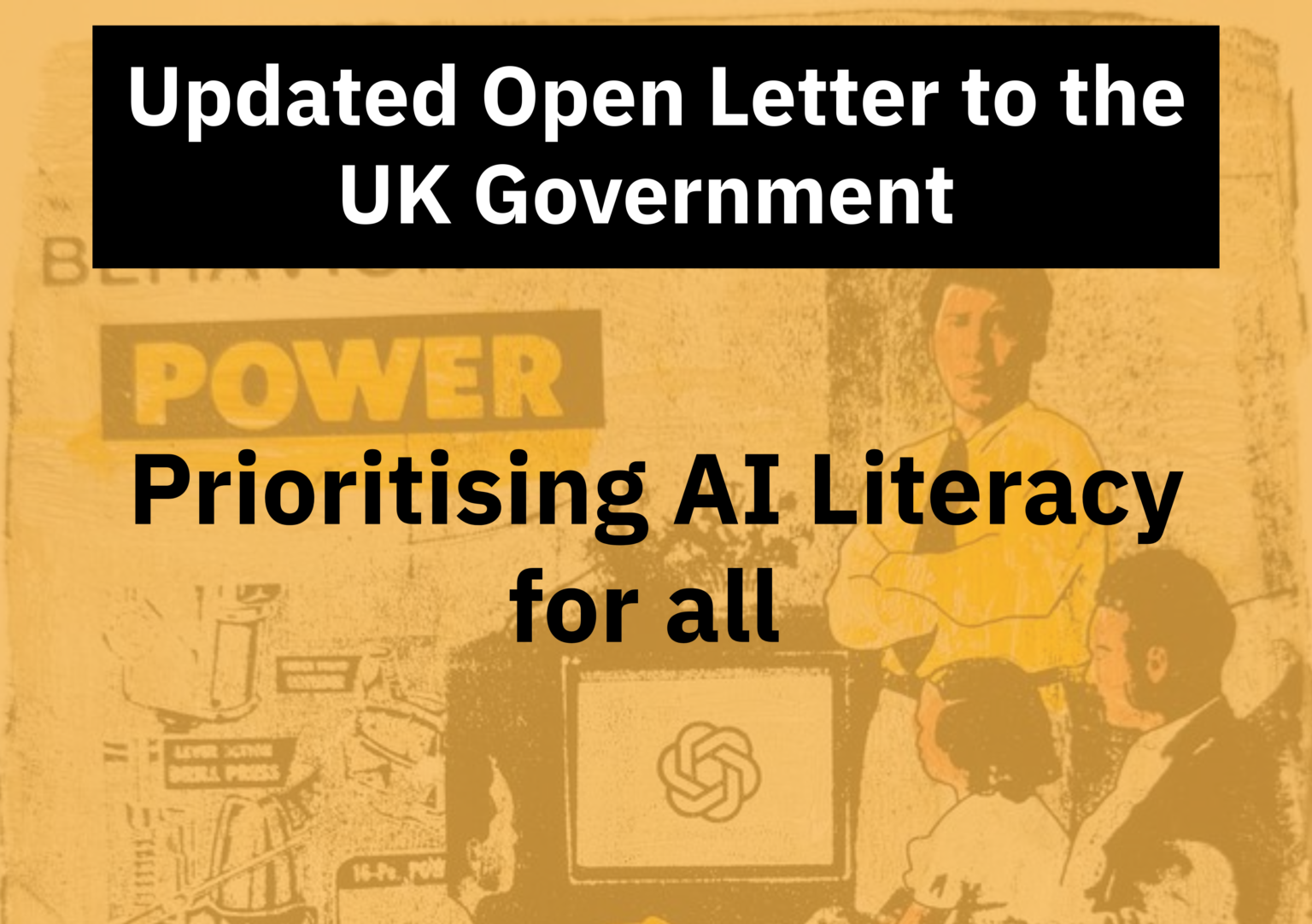

Advocacy: A call for public (critical) AI literacy in the UK


The UK Government recently announced the AI Skills Boost. It promised “free AI training for all,” and claimed that the courses will give people the skills needed to use AI tools effectively. We and AI have contributed to articles in Computer Weekly and Tech Policy Press highlighting the inadequacy of both the approach and the courses, and host an Open Letter calling for investment not just in skills but also in public literacy.

Image: Zoya Yasmine / https://betterimagesofai.org / https://creativecommons.org/licenses/by/4.0/

Collection: Ethical implications of AI hype


We edited a Topical Collection of research articles in the AI and Ethics Journal to explore perspectives on the impact of AI hype and its ethical implications. The articles in this collection examine the ways in which myths, misrepresentation, and overinflation associated with AI capabilities and performance can influence policy agendas, business decisions, and individuals. The authors identify how mechanisms such as framing, linguistic devices, terminology, and representation can influence mental models and the trajectory of future developments relating to AI.

Image: Clarote & AI4Media / https://betterimagesofai.org / https://creativecommons.org/licenses/by/4.0/

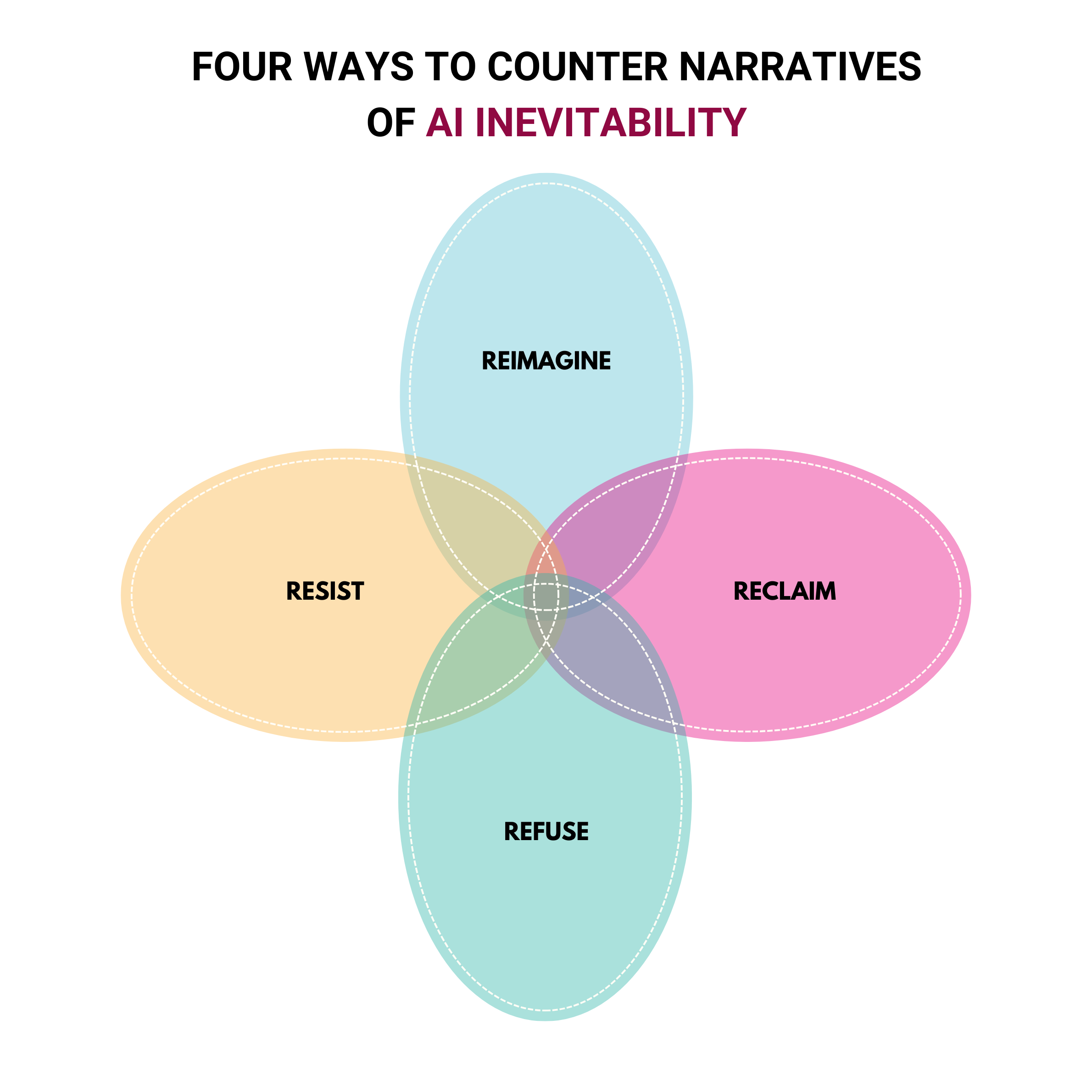

Framework: Challenge the inevitability of extractive AI


With soaring investments into AI technologies and infrastructure and unabating coverage of AI stories in the media, AI is widely perceived as inevitable. However, this perception is shaped by narratives that diminish our agency as humans and present technology as advancing independently from human activity and interests. In response, we have created a framework which outlines initiatives actively defying narratives about the inevitability of AI. It explores instances of Resisting, Refusing, Reimagining and/or Reclaiming AI, and serves as a conceptual framework for challenging, in a variety of ways, the power dynamics underpinning AI technologies.

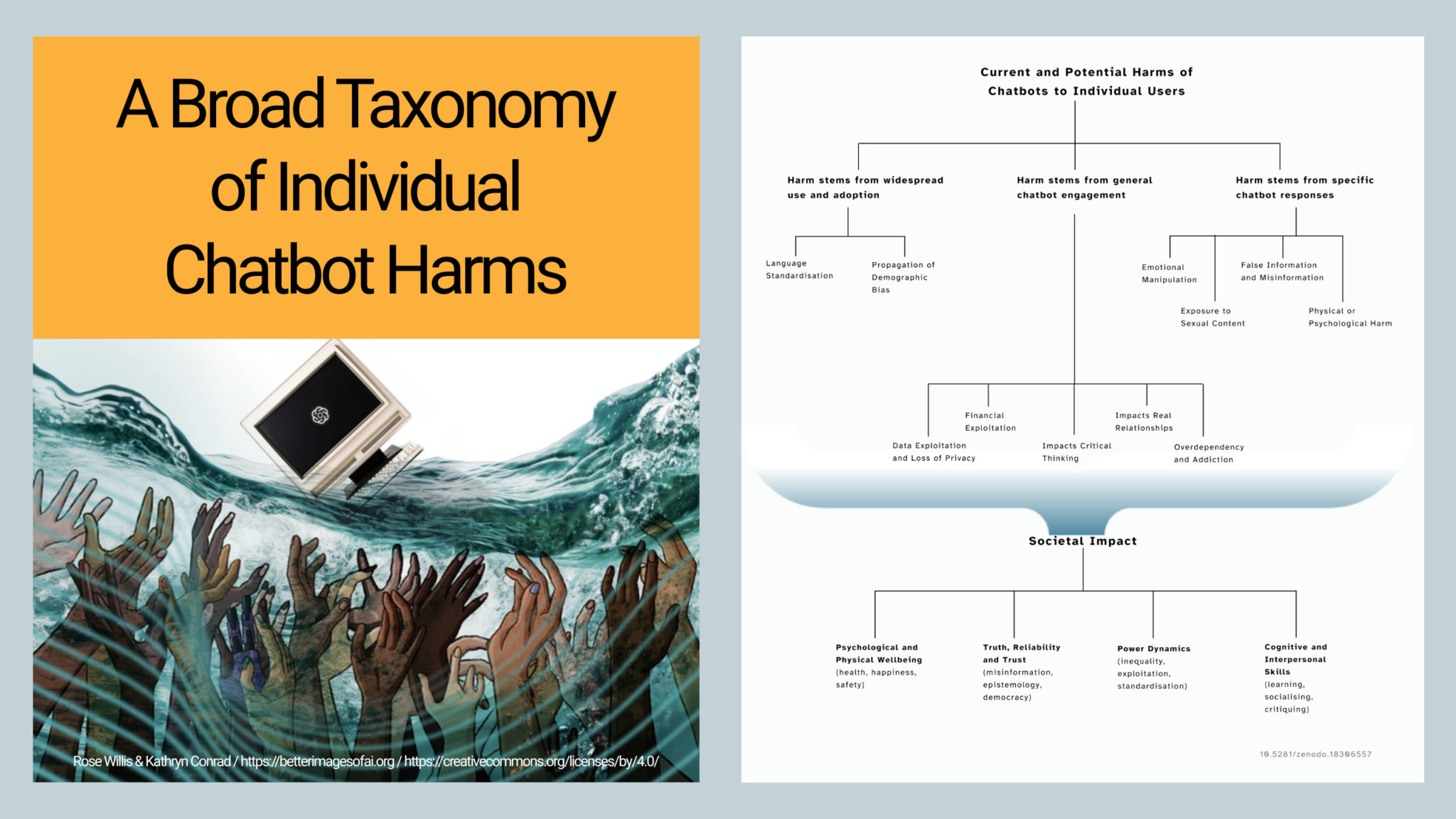

Research: a map of harms encountered by chatbot users


AI chatbots have been integrated into many aspects of daily life, often with little discussion or acknowledgement of the possible harms that this technology may cause. We conducted a narrative review of the current and potential harms that can be caused through interaction with AI chatbots, and identified and mapped 13 different types of harms to individual users, many of which are still hidden.

Image: Hanna Barakat & Archival Images of AI + AIxDESIGN / https://betterimagesofai.org / https://creativecommons.org/licenses/by/4.0/

Exploring metaphors of AI


Better Images of AI and We and AI have been exploring the role of visual and narrative metaphors in shaping our understanding of AI. As part of this, we invited researchers who have been conducting different types of research into the topic to shed light on the ways metaphors can contribute to hype specifically. This informs our project to build an annotated AI metaphor database.

Image: Rick Payne and team / https://betterimagesofai.org / https://creativecommons.org/licenses/by/4.0/

Our mission is to enable the development of critical thinking skills about AI, particularly among those currently most underrepresented in AI decisions and data, and most vulnerable to the consequences of automation. Our focus is to develop accessible interventions that support people in navigating AI narratives and in making informed decisions which genuinely align with their values and interests.

Blog


The growing call for public (critical) AI literacy in the UK

Skills-based digital literacy has failed to provide public benefit. So why is upskilling the UK’s only plan? As part of a range of bombastic statements about the UK’s uncritical embracing of AI in everything, the Government recently announced the AI Skills Boost. It promised “free AI training for all,” and claimed that the courses will…


The Hidden Harms of Chatbot Use – Mapped

A broad taxonomy of AI chatbot harms caused to individual users. At We and AI we have long been concerned by the lack of awareness of the wide range of harms caused by AI chatbots. Despite the huge encouragement to use chatbots as companions, coworkers, doctors, teachers, counsellors, life advisors, friends,…


Review of “Exploring Metaphors of AI: Visualisations, Narratives and Perception”

A curated research session at the Hype Studies Conference, “(Don’t) Believe the Hype?!”, 10-12 September 2025, Barcelona. By Cinzia Pusceddu. Better Images of AI and We and AI have been exploring the role of visual and narrative metaphors in shaping our understanding of AI. As part of this we invited some researchers who have been…


Resisting, refusing, reclaiming, reimagining AI: A new framework to challenge the inevitability of extractive AI

At We and AI we have been working on several projects based around decoding and unpicking the AI hype narratives, framing, words and pictures that are used to legitimise AI which does not work for public good. One narrative we have found to be most insidious is one used to disempower anyone who challenges the…


Exploring Community Visions of AI and Public Good through Critical AI Literacy

Sharing the learning from an intervention to gain deeper insights in public deliberation. In late 2024, We and AI were commissioned by the Ada Lovelace Institute on their project ‘Making good’, to provide a critical AI literacy programme. Through deliberative engagement with communities in Belfast, Brixton and Southampton, the research explored how people feel about…


Three barriers to fostering critical AI literacies

We have been enjoying working with Professor Kathryn Conrad to define some key elements of critical AI literacy, including how it differs from AI and digital literacy, and why it is currently needed as an addition to AI literacy. There is a growing recognition of the need for ways of learning about AI that address…

Motivated by social justice and decoding the AI hype?

We would love to hear from you.