Software that monitors students during tests perpetuates inequality and violates their privacy

In this opinion piece, a university librarian claims that millions of algorithmically proctored (invigilated) tests take place every month around the world, a number that grew exponentially during the pandemic. In his experience, algorithmic 'proctoring' reinforces white supremacy, sexism, ableism and transphobia, invades students' privacy, and is often a civil rights violation.

Remote testing monitored by AI is failing the students forced to undergo it

An opinion piece giving examples of students who have been severely disadvantaged by exam software. In one case, the software forced a Muslim woman to remove her hijab to prove she was not hiding anything behind it.

Speech recognition in education: The powers and perils

Weighs the huge potential of voice recognition technology for gaining insight into children's language and reading development against a 16% difference in misidentified words between white and Black speakers.

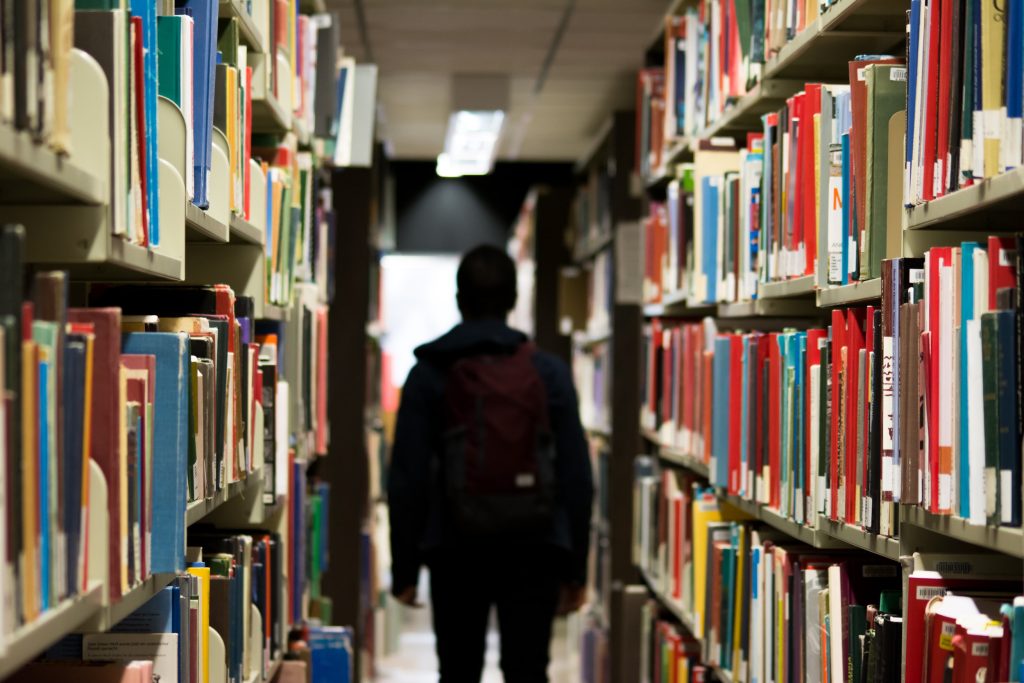

AI teachers may be the solution to our education crisis

This article looks at the global teacher shortage and at how AI might supplement teaching or provide education where it is lacking. It argues that AI could be less biased than human teachers and could therefore help resolve inequity.

Can computers ever replace the classroom?

This article considers the various ways AI could boost virtual learning during the pandemic. It focuses on the Chinese company Squirrel AI, which reports good results with computer tutors and personalised learning, and weighs these results against the risks, such as the surveillance of Muslim Uighurs in Xinjiang.

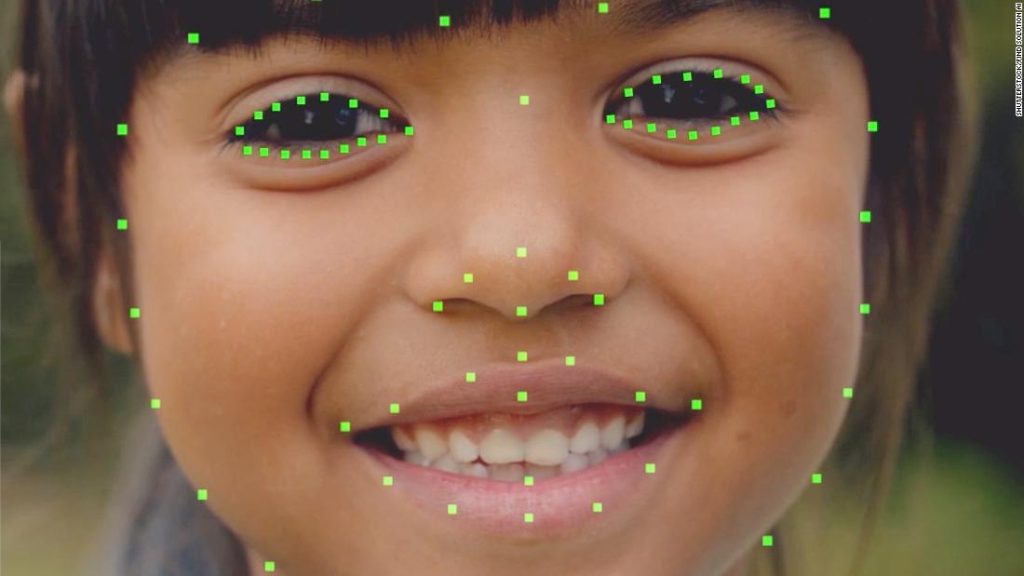

In Hong Kong, this AI reads children's emotions as they learn…

Facial recognition AI, combined with other AI-based assessment, is used to track how children are performing and to boost their performance. However, there is concern that it may not work as well for students of non-Chinese ethnicities, who were not represented in the training data.

AI is coming to schools, and if we are not careful, so will its biases

This article looks at the issues that replacing teachers with AI may raise for children from minority and underprivileged communities.

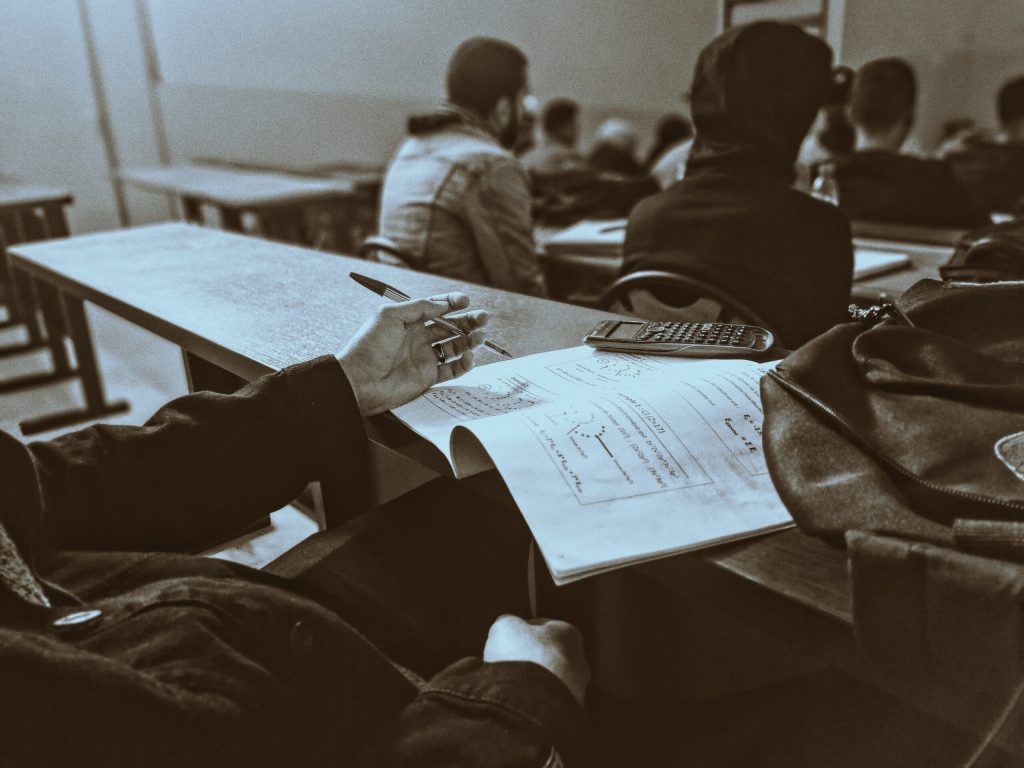

Flawed Algorithms Are Grading Millions of Students' Essays

Automated essay grading in the US has been shown to mark down essays by African American students and by students from other countries.

Student Predictions & Protections: Algorithm Bias in AI-Based Learning

This short article shows how predictive algorithms can penalise underrepresented groups. In its example, the pass rate of students from Guam was underestimated relative to other nationalities. Because so few students from Guam appeared in the data set used to build the prediction model, the model was not accurate enough for that group.

Inbuilt biases and the problem of algorithms

This article details the algorithm used to determine A Level results for students who could not sit exams because of the pandemic in 2020. The algorithm took each student's postcode into account, which meant that students from lower-income areas were more likely to have their grade reduced whilst students in high-income areas were […]

Algorithms can drive inequality. Just look at Britain’s school exam chaos

An outcry over alleged algorithmic bias against pupils from more disadvantaged backgrounds has now left teenagers and experts alike calling for greater scrutiny of the technology.

Postcode or performance: How the A Level results of 2020 exposed a broken system

A case study explaining the algorithmic bias inherent in grade prediction for A Level students. It demonstrates the tangible impact AI can have if it is not scrutinised for bias.

The problem with algorithms: magnifying misbehaviour

This news article describes bias in an algorithm that governed the first round of admissions to a medical university. The data used to define the algorithm's output was biased against both women and applicants with non-European-looking names.

How will artificial intelligence change admissions?

An article detailing how AI might change admissions: the process itself, its consequences, and how students from some countries could be at risk of bias.