AI Bias May Worsen COVID-19 Health Disparities for People of Colour

A new article in the Journal of the American Medical Informatics Association points to the dissemination of “under-developed and potentially biased models” in response to the novel coronavirus. This article draws on recent medical research which shows how potentially biased models informing our health care systems have impacted COVID-19. These biased models could exacerbate the […]

Read More

Google Announces New AI App To Diagnose Skin Conditions

Earlier this week, Google announced the arrival of a new AI app to help diagnose skin conditions. It plans to launch it in Europe later this year. This article discusses mobile apps that aid the self-diagnosis of skin conditions. The apps are intended to be inclusive of all skin types; however, the training data was […]

Read More

Debiasing artificial intelligence: Stanford researchers call for efforts to ensure that AI technologies do not exacerbate health care disparities

Medical devices employing AI stand to benefit everyone in society, but if left unchecked, the technologies could unintentionally perpetuate sex, gender and race biases. Medical devices utilising AI technologies stand to reduce general biases in the health care system, however, if left unchecked, the technologies could unintentionally perpetuate sex, gender, and race biases. The AI […]

Read More

Is a racially biased algorithm delaying healthcare for one million black people?

Sweeping calculation suggests it could be — but how to fix the problem is unclear. An estimated one million black adults would be transferred earlier for kidney disease if US health systems removed a ‘race-based correction factor’ from an algorithm they use to diagnose people and decide whether to administer medication. There is a debate […]

Read More

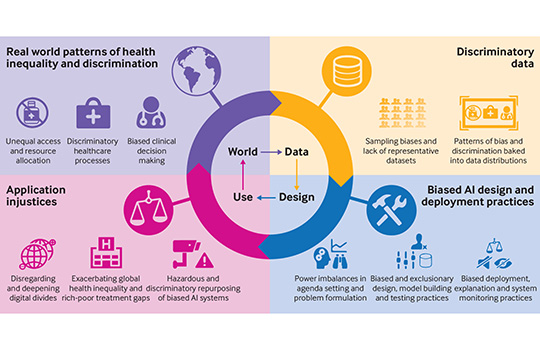

Does “AI” stand for augmenting inequality in the era of covid-19 healthcare?

Artificial intelligence can help tackle the covid-19 pandemic, but bias and discrimination in its design and deployment risk exacerbating existing health inequity, argue David Leslie and colleagues. Among the most damaging characteristics of the covid-19 pandemic has been its disproportionate effect… A team of medical ethics researchers are arguing that bias and discrimination within AI […]

Read More

How medicine discriminates against non-white people and women

Many devices and treatments work less well for them. This article explores how the pulse oximeter, a device used to test oxygen levels in blood for coronavirus patients, exhibits racial bias. Evidence published in medical journals shows that pulse oximeters overestimated blood-oxygen saturation more frequently in black people than in white people.

Read More

How a Popular Medical Device Encodes Racial Bias

Pulse oximeters give biased results for people with darker skin. The consequences could be serious. COVID-19 care has brought the pulse oximeter, a medical device that measures oxygen saturation levels, into the home. This article examines research that shows oximetry’s racial bias. Oximeters have been calibrated, tested and developed using light-skinned […]

Read More

Skin Deep: Racial Bias in Wearable Tech

Technology influences the way we eat, sleep, exercise, and perform our daily routines. But what to do when we discover the technology we rely on is built on faulty methodology and… Health monitoring devices influence the way that we eat, sleep, exercise, and perform our daily routines. But what do we do when we discover […]

Read More

Fitbits and other wearables may not accurately track heart rates in people of colour

Many popular wearable heart rate trackers rely on technology that could be less accurate for consumers who have darker skin, researchers, engineers and other experts told STAT. An estimated 40 million people in the US alone have smartwatches or fitness trackers that can monitor heartbeats. However, some people of colour may be at risk of […]

Read More

Studies find bias in AI models that recommend treatments and diagnose diseases

New research shows that AI models designed for health care settings can exhibit bias against certain ethnic and gender groups. Machine learning models for healthcare hold promise in improving medical treatments by improving predictions of care and mortality; however, their black-box nature and bias in training data sets leave them vulnerable to instead hinder […]

Read More

‘Objective’ Science and White Bias: BAME Under-Representation in Biomedical Research (Part 2)

By Amber Roguski. This is the second post in a two-part blog series. It explores the under-representation of Minority Ethnic individuals as participants in biomedical research. This article explores racial bias and exclusion within biomedical research. White people are 87% more likely to be included in medical research than people from a Minority Ethnic background, […]

Read More

Understanding AI bias in banking

As banks invest in AI solutions, they must also explore how AI bias impacts customers and understand the right and wrong ways to approach it. AI systems could unfairly decline new bank account applications, block payments and credit cards, and deny loans and other vital financial services and products to qualified customers because of how their […]

Read More

Racial Justice: Decode the Default (2020 Internet Health Report)

Technology has never been colourblind. It’s time to abolish notions of “universal” users of software. This is an overview of racial justice in tech and in AI that considers how systemic change must happen for technology to support equity.

Read More

Racist Robots? How AI bias may put financial firms at risk

Artificial intelligence (AI) is making rapid inroads into many aspects of our financial lives. Algorithms are being deployed to identify fraud, make trading decisions, recommend banking products, and evaluate loan applications. This is helping to reduce the costs of financial products and improve th… Through a case study of mortgage applications, this article shows how […]

Read More

AI risks replicating tech’s ethnic minority bias across business

Diverse workforce essential to combat new danger of ‘bias in, bias out’. This short article looks at the link between the lack of diversity in the AI workforce and the bias against ethnic minorities within financial services: the “new danger of ‘bias in, bias out’”.

Read More

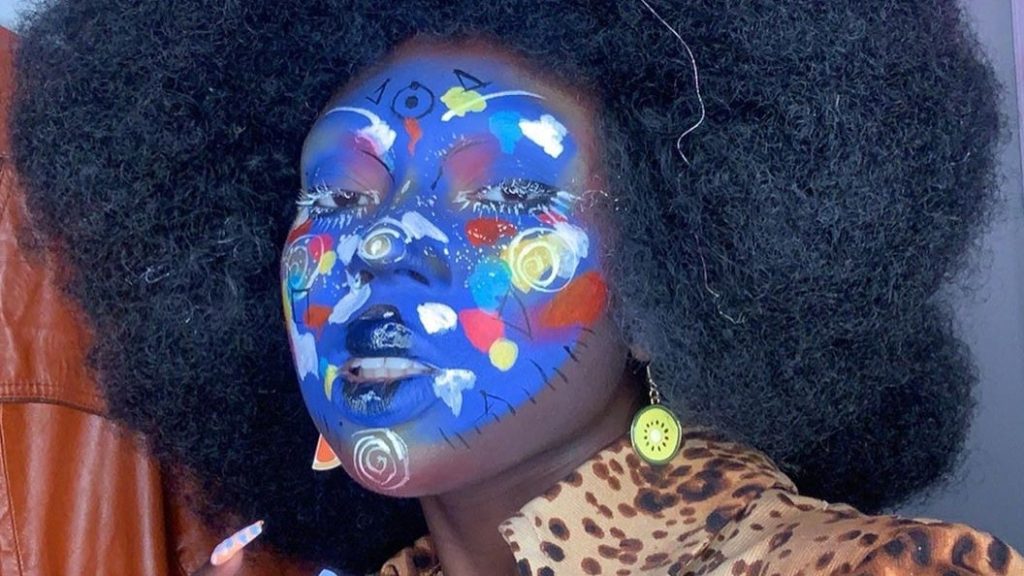

Can make-up be an anti-surveillance tool?

As protests against police brutality and in support of the Black Lives Matter movement continue in the wake of George Floyd’s killing, protection against mass surveillance has become top of mind. This article explains how make-up can be used both as a way to evade facial recognition systems and as an art form.

Read More

Machine Bias – There’s software used across the country to predict future criminals and it’s biased against blacks

There’s software used across the country to predict future criminals. And it’s biased against blacks. This article details software that is used to predict the likelihood of reoffending. It uses case studies to demonstrate the racial bias prevalent in the software used to predict the ‘risk’ of further crimes. Even for […]

Read More

Another arrest, and jail time, due to a bad facial recognition match

A New Jersey man was accused of shoplifting and trying to hit an officer with a car. He is the third known black man to be wrongfully arrested based on face recognition.

Read More

Algorithms and bias: What lenders need to know

Much of the software now revolutionising the financial services industry depends on algorithms that apply artificial intelligence (AI) – and increasingly, machine learning – to automate everything from simple, rote tasks to activities requiring sophisticated judgment. Explains (from a US perspective) how the development of machine learning and algorithms has left financial services at risk […]

Read More

AI Perpetuating Human Bias in the Lending Space

AI was supposed to be the pinnacle of technological achievement — a chance to sit back and let the robots do the work. While it’s true AI completes complex tasks and calculations faster and more accurately than any human could, it’s shaping up to need some supervision. There is data which predicts that the introduction […]

Read More

Google hired Timnit Gebru to be an outspoken critic of unethical AI. Then she was fired for it.

Timnit Gebru is one of the most high-profile Black women in her field and a powerful voice in the new field of ethical AI, which seeks to identify issues around bias, fairness, and responsibility. Google hired her, then fired her. This article argues that leading AI ethics researchers, such as Timnit […]

Read More

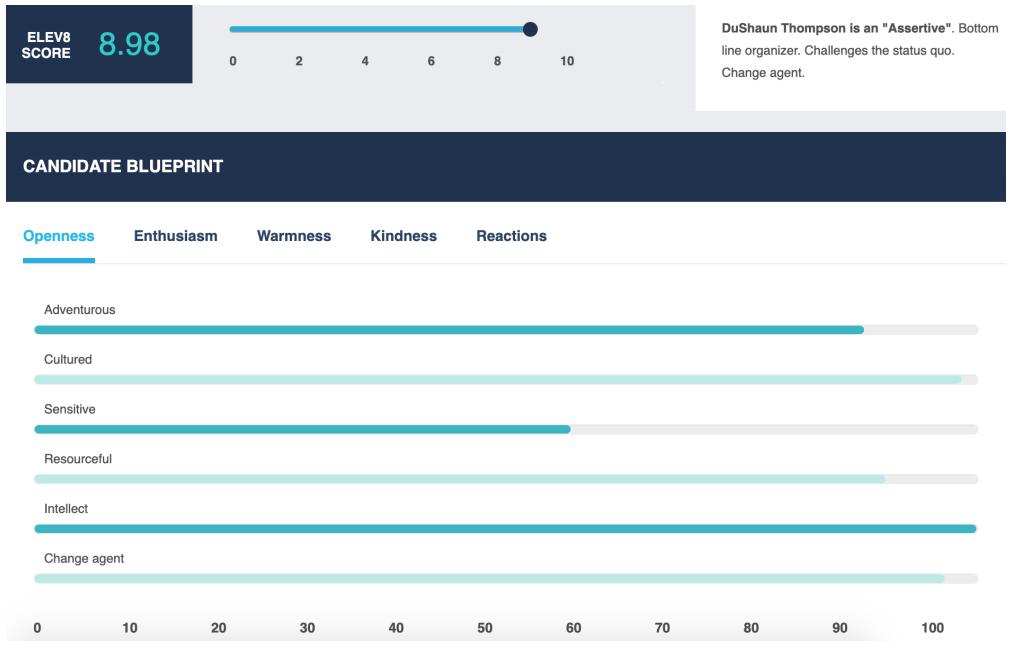

Face-scanning algorithm increasingly decides whether you deserve the job

HireVue claims it uses artificial intelligence to decide who’s best for a job. Outside experts call it “profoundly disturbing.” An article about HireVue’s “AI-driven assessments”. More than 100 employers now use the system, including Hilton and Unilever, and more than a million job seekers have been analysed.

Read More

How to use AI hiring tools to reduce bias in recruiting

From machine learning tools that optimize job descriptions, to AI-powered psychological assessments of traits like fairness, here’s a look at the strengths – and pitfalls – of AI. Commercial AI recruitment systems cannot always be trusted to be effective in doing what the vendors say they do. The technical capabilities this type of software offers […]

Read More

Using AI to eliminate bias from hiring

AI could eliminate unconscious bias and sort through candidates in a fair way. Many current AI tools for recruiting have flaws, but they can be addressed. The beauty of AI is that we can design it to meet certain beneficial specifications. A movement among AI practitioners like OpenAI and the Future of Life Institute is […]

Read More

Rights group files federal complaint against AI-hiring firm HireVue, citing ‘unfair and deceptive’ practices

AI is now being used to shortlist job applicants in the UK: let’s hope it’s not racist. AI-based video interviewing software such as that made by HireVue is being used by companies for the first time in job interviews in the UK to shortlist the best job applicants. HireVue’s “AI-driven assessments,” which more than […]

Read More

In the Covid-19 jobs market, biased AI is in charge of all the hiring

As millions of people flood the jobs market, companies are turning to “biased and racist” AI to sift through the avalanche of CVs. This can lock certain groups out of employment and […]

Read More

These robots handle dull and dangerous work humans do today — and can create new jobs

Avidbots, iUNU and 6 River Systems are among the start-ups on CNBC’s Upstart 100 list that are putting robots to work doing “dull, dirty and dangerous” tasks previously handled by humans. This article highlights the benefits of using AI and robots in certain work situations.

Read More

PwC facial recognition tool criticised for home working privacy invasion

Accounting giant PwC has come under fire for the development of a facial recognition tool that logs when employees are absent from their computer screens while they work from home. The technology, which is being developed specifically for financial institutions, recognises the faces of workers via t… PwC has come under fire for the development […]

Read More

AI will impact future of jobs

Will AI eliminate more jobs than it creates? Experts weigh in on a hot topic that impacts almost every industry. The impact of AI on future jobs.

Read More

An AI expert told ’60 Minutes’ that AI could replace 40% of jobs

Artificial intelligence can replace repetitive tasks, but it doesn’t have the empathy to lead. View of an AI expert on human job loss. Provides an anecdotal view from an AI expert on what jobs are already being displaced with AI and automation.

Read More

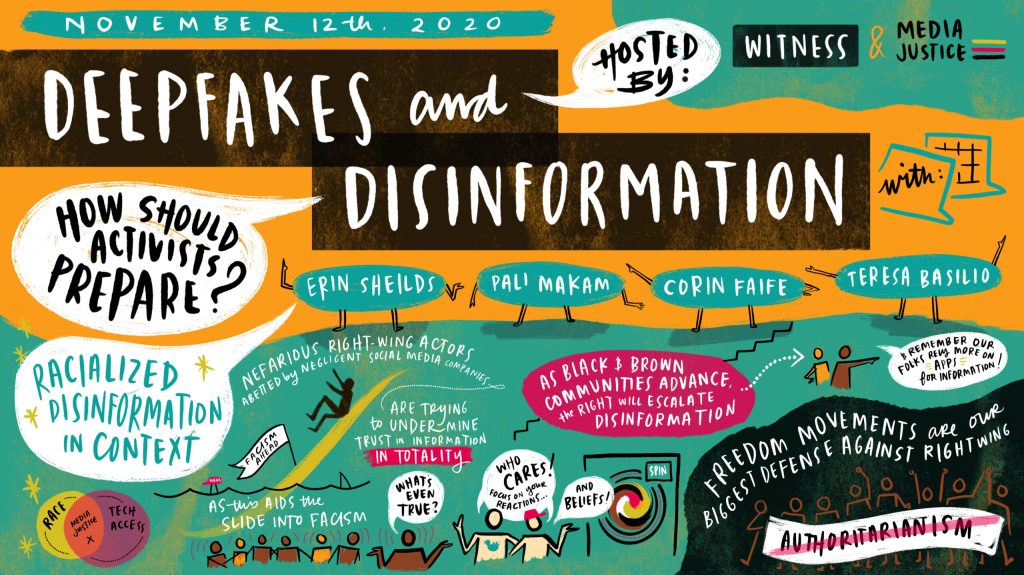

Deepfakes and Disinformation

Earlier this month, in the aftermath of a decisive yet contested election, MediaJustice, in partnership with MediaJustice Network member WITNESS, brought together nearly 30 civil society groups, researchers, journalists, and organizers to discuss the impacts visual disinformation has had on institutions and information systems. Deepfakes and disinformation can be used in racialised disinformation […]

Read More

Software that monitors students during tests perpetuates inequality and violates their privacy

In this opinion piece, a university librarian claims that millions of algorithmically proctored (invigilated) tests are happening every month around the world, a number that has increased exponentially during the pandemic. In his experience, algorithmic ‘proctoring’ reinforces white supremacy, sexism, ableism, and transphobia, invades students’ privacy and is often a civil rights violation.

Read More

Remote testing monitored by AI is failing the students forced to undergo it

An opinion piece giving examples of students who have been highly disadvantaged by exam software, including a Muslim woman forced by the software to remove her hijab in order to prove she was not hiding anything behind it.

Read More

Speech recognition in education: The powers and perils

Weighing up the huge potential of voice recognition technology to gain insights into children’s language and reading development, against a difference of 16% in misidentified words between white and black voices.

Read More

AI teachers may be the solution to our education crisis

This article looks at the global shortage of teachers and how AI might be used to supplement and provide lacking education, and argues that it could be less biased than teachers, thereby resolving inequity.

Read More

Can computers ever replace the classroom?

This article considers the various ways AI can be used during the pandemic to boost virtual learning, focusing on Chinese company Squirrel AI who are reporting good results with computer tutors and personalised learning, and weighing up the risks, such as surveillance of Muslim Uighurs in Xinjiang.

Read More

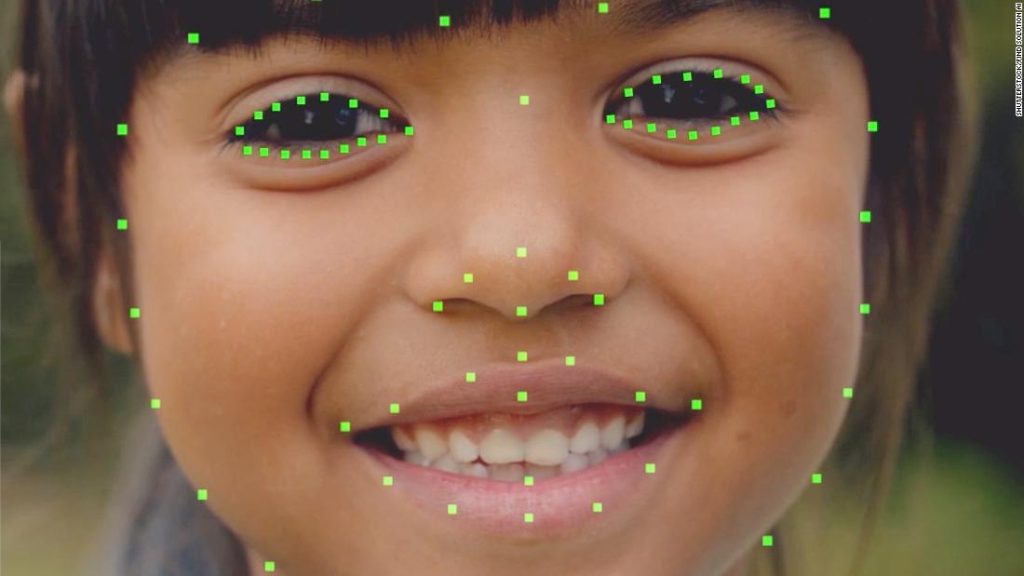

In Hong Kong, this AI reads children’s emotions as they learn…

Facial recognition AI, combined with other AI assessment, is used to spot how children are performing and boost their performance. However, there is concern that it may not work so well for students with non-Chinese ethnicities who were not part of the training data.

Read More

AI is coming to schools, and if we are not careful, so will its biases

This article looks at what issues may arise for children from minority and underprivileged communities from replacing teachers with AI.

Read More

Flawed Algorithms are Grading Millions of Students’ Essays

Automated essay grading in the US has been shown to mark down African American students and those from other countries.

Read More

Student Predictions & Protections: Algorithm Bias in AI-Based Learning

This short article gives an example of how predictive algorithms can penalise underrepresented groups of people. In this example, students from Guam had their pass rate underestimated versus other nationalities, because of the low number of students in the data set used to build the prediction model, resulting in insufficient accuracy.

Read More

Inbuilt biases and the problem of algorithms

This article details the algorithm used to inform A Level results for students who could not take exams due to the 2020 pandemic. The algorithm took into account the postcode of the student, which meant that students from lower income areas were more likely to have their grade reduced whilst students in high-income areas were […]

Read More

Algorithms can drive inequality. Just look at Britain’s school exam chaos

An outcry over alleged algorithmic bias against pupils from more disadvantaged backgrounds has now left teenagers and experts alike calling for greater scrutiny of the technology.

Read More

Postcode or performance: How the A Level results of 2020 exposed a broken system

Case study explaining algorithm bias inherent in grade prediction for A Level students. Demonstrates the physical impact AI can have, if not scrutinised for bias.

Read More

The problem with algorithms: magnifying misbehaviour

This news article gives an example of bias present in an algorithm governing the first round of admissions into a medical university. The data used to define the algorithm’s output showed bias against both women and people with non-European-looking names.

Read More

How will artificial intelligence change admissions?

An article detailing how AI might change admissions in terms of the process, the consequences and how students from some countries could be at risk of bias.

Read More

AI and Climate Change: The Promise, the Perils and Pillars for Action

The paper provides pillars of action for the AI community, and includes a focus on climate justice where the author recommends that environmental impacts should not be externalised onto the most marginalised populations, and that the gains are not only captured by digitally mature countries in the global north. This will require centring front-line communities […]

Read More

Apprise: Using AI to unmask situations of forced labour and human trafficking

The creators of the Apprise app share how they created a system to assist workers in Thailand to avoid vulnerable situations. Forced labour exploiters continually tweak and refine their own practices of exploitation, in response to changing policies and practices of inspections. The article showcases efforts to create AI tools that predict changing patterns of human exploitation. […]

Read More

AI can be sexist and racist — it’s time to make it fair

Computer scientists must identify sources of bias, de-bias training data and develop artificial-intelligence algorithms that are robust to skews in the data. The article raises the challenge of defining fairness when building databases. For example, should the data be representative of the world as it is, or of a world that many would aspire to? […]

Read More

Establishing an AI code of ethics will be harder than people think

Over the past six years, the New York City police department has compiled a massive database containing the names and personal details of at least 17,500 individuals it believes to be involved in criminal gangs. The effort has already been criticized by civil rights activists who say it is inaccurat… The New York police department has […]

Read More

We tested Europe’s new lie detector for travellers – and immediately triggered a false positive

4.5 million euros have been pumped into the virtual policeman project meant to judge the honesty of travelers. An expert calls the technology “not credible.” IBorderCtrl’s lie detection system was developed in England by researchers at Manchester Metropolitan University. It claims that its virtual cop can detect deception by picking up on the micro gestures the […]

Read More

Adjudicating by Algorithm, Regulating by Robot

Sophisticated computational techniques, known as machine-learning algorithms, increasingly underpin advances in business practices, from investment banking to product marketing and self-driving cars. Machine learning—the foundation of artificial intelligence—portends vast changes to the private sect… This article highlights the benefits of artificial intelligence in adjudication and making law in terms of improving accuracy, reducing human biases […]

Read More

Artificial intelligence in the courtroom

The impact of AI on litigation. The current use of AI in reviewing documents, predicting the outcome of cases and predicting success rates for lawyers. This article highlights concerns about fallibility and the need for human oversight.

Read More

How AI is impacting the UK’s legal sector

We examine the impact of artificial intelligence on the UK’s legal sector.

Read More

Six ways the legal sector is using AI right now

Law Society partner and equity crowdfunding platform Seedrs explains how developments within AI are taking law firms and solicitors to the next level. An article on how AI can be used in adjudication and law in general. It highlights that although AI has vast potential, it has not been broadly adopted so far.

Read More

Facial recognition could stop terrorists before they act

In their zeal and earnest desire to protect individual privacy, policymakers run the risk of stifling innovation. The author makes the case that using facial recognition to prevent terrorism is justified as our world is becoming more dangerous every day; hence, policymakers should err on the side of public safety.

Read More

Is police use of face recognition now illegal in the UK?

The UK Court of Appeal has determined that the use of a face-recognition system by South Wales Police was “unlawful”, which could have ramifications for the widespread use of such technology across the UK. The UK Court of Appeal unanimously decided against a face-recognition system used by South Wales Police.

Read More

UK police adopting facial recognition, predictive policing without public consultation

UK police forces are largely adopting AI technologies, in particular facial recognition and predictive policing, without public consultation. This article warns that UK police are using facial recognition and predictive policing without conducting public consultations. It also calls for transparency and input from the public about how those technologies are being used.

Read More

The algorithms that detect hate speech online are biased against black people

A new study shows that leading AI models are 1.5 times more likely to flag tweets written by African Americans as “offensive” compared to other tweets.

Read More

Abolish the #TechToPrisonPipeline

Crime-prediction technology reproduces injustices & causes real harm. The open letter highlights why crime-predicting technologies tend to be inherently racist.

Read More

AI researchers say scientific publishers help perpetuate racist algorithms

The news: An open letter from a growing coalition of AI researchers is calling out scientific publisher Springer Nature for a conference paper it reportedly planned to include in its forthcoming book Transactions on Computational Science & Computational Intelligence. The paper, titled “A Deep Neural… Crime […]

Read More

Google think tank’s report on white supremacy says little about YouTube’s role in people driven to extremism

A Google-funded report examines the relationship between white supremacists and the internet, but it makes scant reference—all of it positive—to YouTube, the company’s platform that many experts blame more than any other for driving people to extremism. YouTube’s algorithm has been found to direct users to extreme content, sucking them into violent ideologies.

Read More

Is Facebook Doing Enough To Stop Racial Bias In AI?

After recently announcing Equity and Inclusion teams to investigate racial bias across their platforms, and undergoing a global advertising boycott over alleged racial discrimination, is Facebook doing enough to tackle racial bias? Disinformation driven via bots that game the AI systems of social media platforms to reinforce racial myths and attitudes as well as the […]

Read More

AI biased news media generation

Yeah, great start after sacking human hacks: Microsoft’s AI-powered news portal mixes up photos of women-of-color in article about racism. Blame Reg Bot 9000 for any blunders in this story, by the way. News media is being automated and generated by AI, which can inherit bias from the data sets used for text generation.

Read More

Twitter image cropping

Another reminder that bias, testing, diversity is needed in machine learning: Twitter’s image-crop AI may favour white men, women’s chests. Strange, it didn’t show up during development, says social network. Digital imagery favours white people in framing and de-emphasises the visibility of non-white people.

Read More

Google Cloud’s image tagging AI

Google Cloud’s AI recog code ‘biased’ against black people – and more from ML land. Including: Yes, that nightmare smart toilet that photographs you mid… er, process. Digital imagery tagging provides negative context for non-white people.

Read More

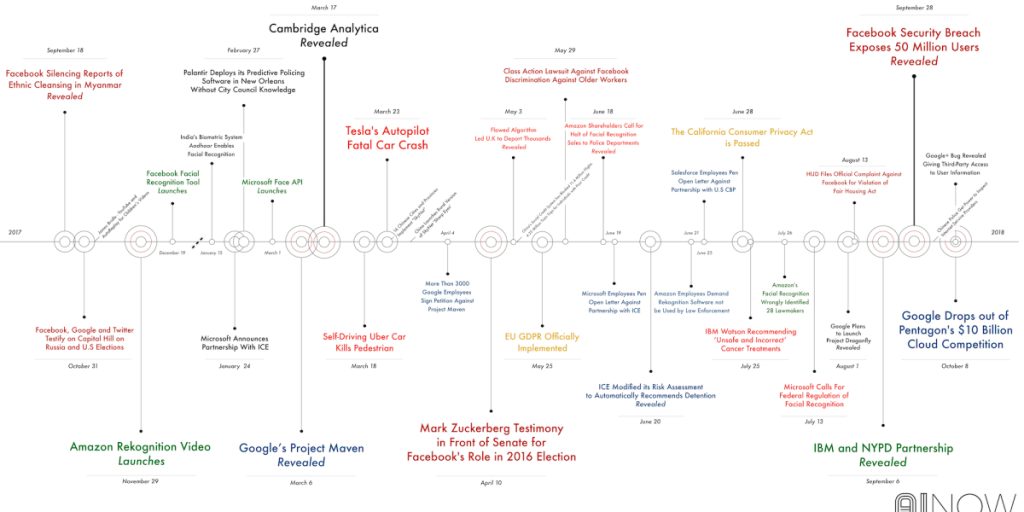

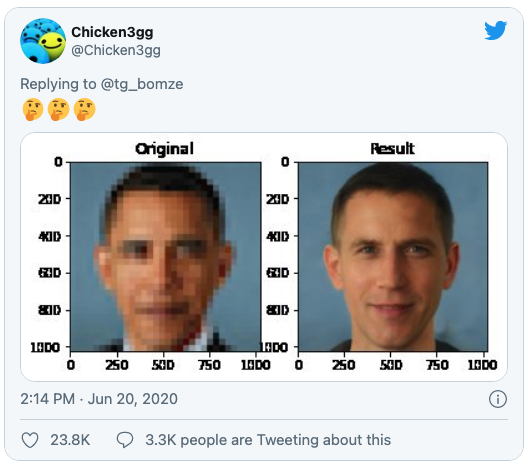

Image processing

Once again, racial biases show up in AI image databases, this time turning Barack Obama white. Researchers used a pre-trained off-the-shelf model from Nvidia. Digital imagery tagging provides negative context for non-white people.

Read More