Influence of Skin Type and Wavelength on Light Wave Reflectance

The intent of the study was to determine the influence of skin type and wavelength on light reflectance for pulse rate detection. PPG sensors detecting changes in blood flow are assessed for effectiveness on dark and light skin tones. Studies have shown that green light lacks precision and accuracy, and may not read at all […]

Accuracy in Wrist-Worn, Sensor-Based Measurements of Heart Rate and Energy Expenditure in a Diverse Cohort

The ability to measure physical activity through wrist-worn devices provides an opportunity for cardiovascular medicine. However, the accuracy of commercial devices is largely unknown. The aim of this work is to assess the accuracy of seven commercially available wrist-worn devices in estimating hea… This research paper assesses the accuracy of seven commercially available wrist-worn devices, […]

Millions of black people affected by racial bias in healthcare algorithms

Study reveals rampant racism in decision-making software used by US hospitals — and highlights ways to correct it. An algorithm widely used in US hospitals to allocate health care to patients has been systematically discriminating against black people, a sweeping analysis has found. The study concluded that the algorithm was less likely to refer black […]

The Health Pulse: AI Bias in Healthcare

Analytics Exchange: Podcasts from SAS. The Health Pulse: AI and Bias in Healthcare – Mar 5, 2021. Data scientist Hiwot Tesfaye joins Greg for a conversation about the use of algorithms in healthcare and how models can introduce bias. They’ll discuss current examples of health care bias, who should be held responsible and […]

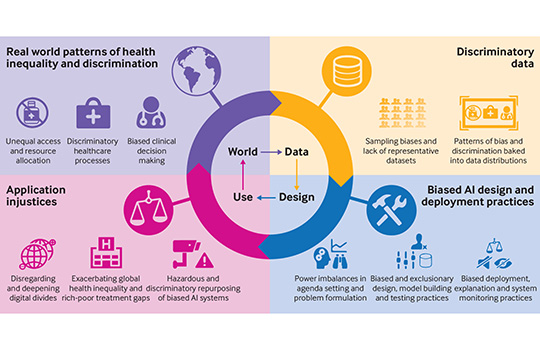

Bias and Discrimination in Healthcare AI Models

AI is helping healthcare organisations determine care management programs and treatment plans – who gets what care – but these models and algorithms can be biased and introduce discrimination in the allocation or denial of care.

AI and Bias in Healthcare

This guest panel series examines the use of AI in assisting healthcare, with a particular focus on automating tasks, communicating diagnoses and allocating resources. It examines the sources of bias in AI integrated systems and what we can do to eliminate it.

Use of AI-based tools for healthcare purposes: a survey study from consumers’ perspectives

This study sheds more light on factors affecting perceived risks and proposes some recommendations on how to practically reduce these concerns. The findings of this study provide implications for research and practice in the area of AI-based CDS. Regulatory agencies, in cooperation with healthcare… This paper examines AI-based tools for healthcare from a consumer’s perspective. […]

Understanding Racial Bias in Medical AI Training Data

By Adriana Krasniansky Interest in artificially intelligent (AI) health care has grown at an astounding pace: the global AI health care market is expected to reach $17.8 billion by 2025 and AI-powered systems are being designed to support medical activities ranging from patient diagnosis and triagin… AI-powered systems are being designed to support medical activities […]

Bias at warp speed: how AI may contribute to the disparities gap in the time of COVID-19

Abstract. The COVID-19 pandemic is presenting a disproportionate impact on minorities in terms of infection rate, hospitalizations, and mortality. Many believe that artificial intelligence could be a solution to guide clinical decision making to overcome this novel disease. […]

AI Bias May Worsen COVID-19 Health Disparities for People of Colour

A new article in the Journal of the American Medical Informatics Association points to the dissemination of “under-developed and potentially biased models” in response to the novel coronavirus. This article draws on recent medical research which shows how potentially biased models informing our health care systems have impacted COVID-19. These biased models could exacerbate the […]

Google Announces New AI App To Diagnose Skin Conditions

Earlier this week, Google announced the arrival of a new AI app to help diagnose skin conditions. It plans to launch it in Europe later this year. This article discusses mobile apps that aid the self-diagnosis of skin conditions. The apps do intend to be inclusive of all skin types, however, the training data was […]

The Potential for AI in healthcare

The complexity and rise of data in healthcare means that artificial intelligence (AI) will increasingly be applied within the field. Several types of AI are already being employed by payers and providers of care, and life sciences companies. The key categories … This report discusses current applications of AI as well as potential future applications […]

Addressing Bias: Artificial Intelligence in Cardiovascular Medicine

Artificial intelligence (AI) is providing opportunities to transform cardiovascular medicine. As the leading cause of morbidity and mortality worldwide, cardiovascular disease is prevalent across all populations, with clear benefit to operationalise clinical and biomedical data to improve workflow… Medical paper which examines the potential of Artificial Intelligence in cardiovascular medicine; it could hugely benefit patient […]

Debiasing artificial intelligence: Stanford researchers call for efforts to ensure that AI technologies do not exacerbate health care disparities

Medical devices employing AI stand to benefit everyone in society, but if left unchecked, the technologies could unintentionally perpetuate sex, gender and race biases. Medical devices utilising AI technologies stand to reduce general biases in the health care system, however, if left unchecked, the technologies could unintentionally perpetuate sex, gender, and race biases. The AI […]

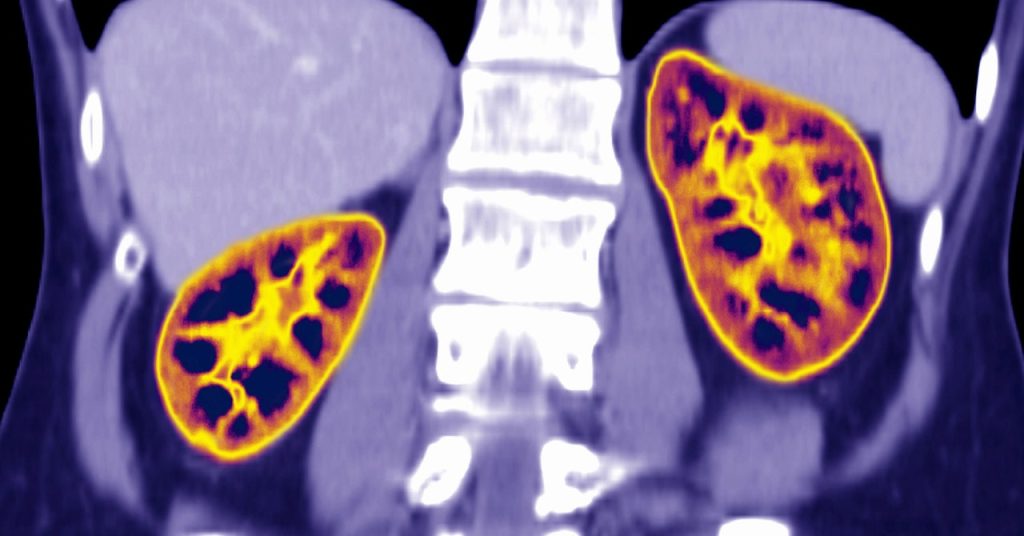

Is a racially biased algorithm delaying healthcare for one million black people?

Sweeping calculation suggests it could be — but how to fix the problem is unclear. An estimated one million black adults would be transferred earlier for kidney disease if US health systems removed a ‘race-based correction factor’ from an algorithm they use to diagnose people and decide whether to administer medication. There is a debate […]

If AI is going to be the world’s doctor, it needs better textbooks

Artificial intelligence in healthcare currently reflects the same racial and gender biases as the culture at large. Those prejudices are built into the data. AI technologies are being used to diagnose Alzheimer’s disease by assessing speech. This technology could aid early diagnosis of Alzheimer’s. However, it’s evident that the algorithms behind this technology are trained […]

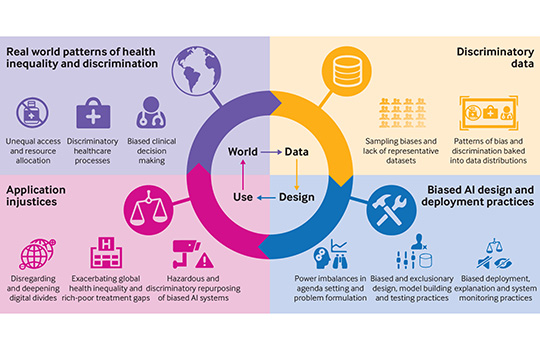

Does “AI” stand for augmenting inequality in the era of covid-19 healthcare?

Artificial intelligence can help tackle the covid-19 pandemic, but bias and discrimination in its design and deployment risk exacerbating existing health inequity, argue David Leslie and colleagues. Among the most damaging characteristics of the covid-19 pandemic has been its disproportionate effect… A team of medical ethics researchers are arguing that bias and discrimination within AI […]

The Challenge of AI Bias and Diverse Healthcare Data

AI is a double-edged sword when it comes to bias: on the one hand it could treat every patient objectively and reduce bias, and on the other it could adversely affect certain patient populations by using non-representative data. This video examines the potential of AI in reducing biases within medical diagnosis, by using AI technologies to understand how diseases […]

Can we trust AI not to further embed racial bias and prejudice?

Heralded as an easy fix for health services under pressure, data technology is marching ahead unchecked. But is there a risk it could compound inequalities? Poppy Noor investigates. Journalist Poppy Noor investigates how black people with melanoma are being underserved in healthcare, and the link to the racist algorithms driving new cancer software. Most of […]

AI-Driven Dermatology Could Leave Dark-Skinned Patients Behind

AI technologies have the potential to save thousands of people from skin cancer yearly, by aiding early diagnosis. However, this shift is also potentially dangerous for darker-skinned patients, as the demographic imbalances in dermatology can leave machine learning diagnoses less effective for darker-skinned patients.

How medicine discriminates against non-white people and women

Many devices and treatments work less well for them. This article explores how the pulse oximeter, a device used to test oxygen levels in blood for coronavirus patients, exhibits racial bias. Medical journals give evidence that pulse oximeters overestimated blood-oxygen saturation more frequently in black people than in white people.

How a Popular Medical Device Encodes Racial Bias

Pulse oximeters give biased results for people with darker skin. The consequences could be serious. COVID-19 care has brought the pulse oximeter, a medical device that helps you understand your oxygen saturation levels, into the home. This article examines research that shows oximetry’s racial bias. Oximeters have been calibrated, tested and developed using light-skinned […]

Skin Deep: Racial Bias in Wearable Tech

Technology influences the way we eat, sleep, exercise, and perform our daily routines. But what to do when we discover the technology we rely on is built on faulty methodology and… Health monitoring devices influence the way that we eat, sleep, exercise, and perform our daily routines. But what do we do when we discover […]

Fitbits and other wearables may not accurately track heart rates in people of colour

Many popular wearable heart rate trackers rely on technology that could be less accurate for consumers who have darker skin, researchers, engineers and other experts told STAT. An estimated 40 million people in the US alone have smartwatches or fitness trackers that can monitor heartbeats. However, some people of colour may be at risk of […]

How an Algorithm Blocked Kidney Transplants to Black Patients

An algorithm used in the US to estimate kidney function, the severity of the disease and the allocation of kidney transplants has been found to be racially skewed, under-allocating necessary resources to black patients. A study demonstrates that the algorithm took an individual’s race into account, meaning that they were not offered a kidney transplant when […]

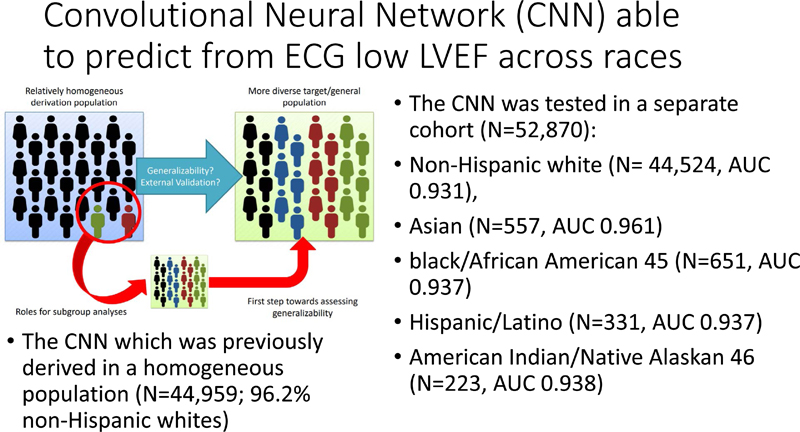

Assessing and Mitigating Bias in Medical Artificial Intelligence

Deep learning algorithms using data from homogeneous populations may be difficult to generalise, and potentially exacerbate racial disparities in health and health care. This research paper explores (1) whether the accuracy of a deep learning algorithm varies depending on race / ethnicity, and (2) whether this performance is down to racial variation or external health […]

Racial Bias Found in Health Care Company Algorithm

New York news video reporting on the investigation into UnitedHealth Group over allegations that they are utilising a racially biased algorithm. A new study reports that the new AI software leads to lower levels of care for black patients compared to white patients.

Can AI Tackle Racial Inequalities in Healthcare?

News article, drawing on a study published in Nature Medicine, which explains how algorithms might be able to help tackle racial biases within doctors’ own judgements. Doctors’ judgement of how much pain a patient is feeling has been linked to discrimination and racism, with black patients likely to have their pain level underestimated, which can adversely […]

Health Care AI Systems are Biased

This article explains how bias in AI systems is contributing to the exacerbation of racial health disparities. There is a huge issue with the misrepresentation of our data sets, which could result in health systems that do not correctly identify or treat illnesses in non-white patients. For example, skin-cancer detection algorithms trained on light-skinned individuals, […]

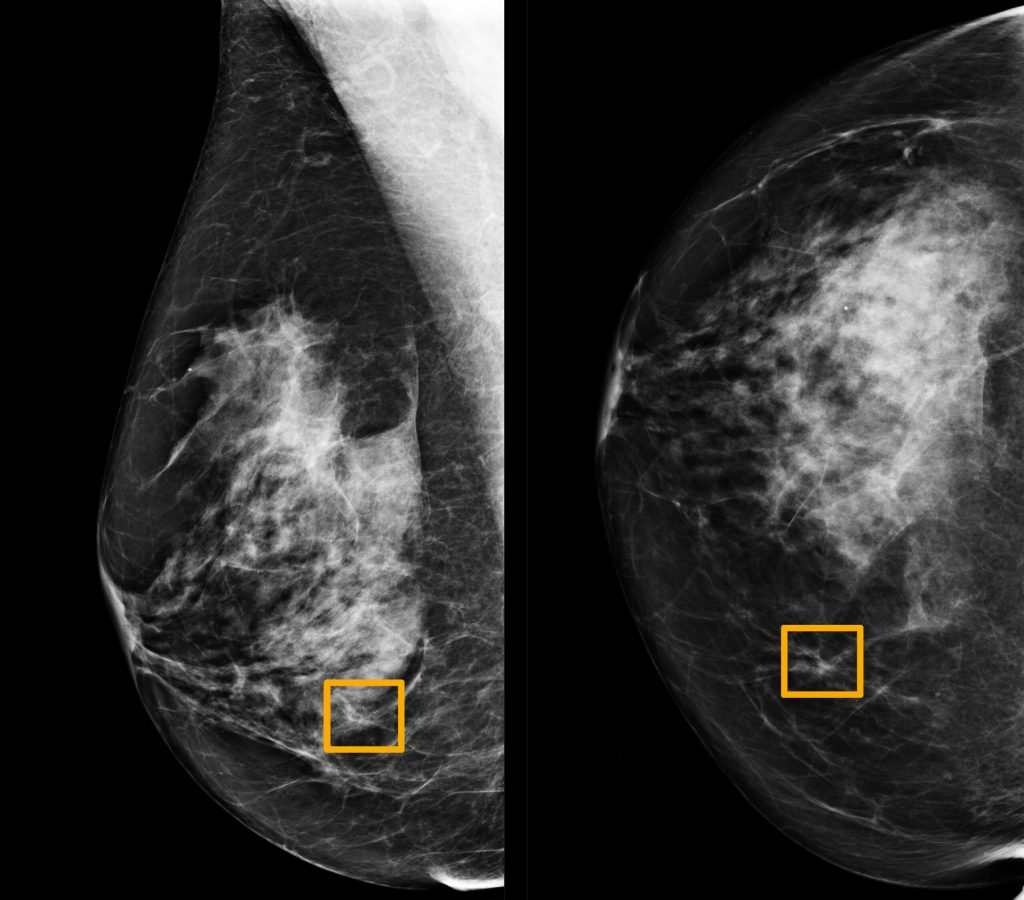

Google’s AI for mammograms doesn’t account for racial differences

A short article examining Google’s new AI for mammograms. It has hopes of replacing human radiologists with faster and more accurate diagnosis. However, there are worries over its accuracy in spotting cancer in diverse racial and ethnic populations, both due to white focused data sets and inherent biases within the healthcare system.

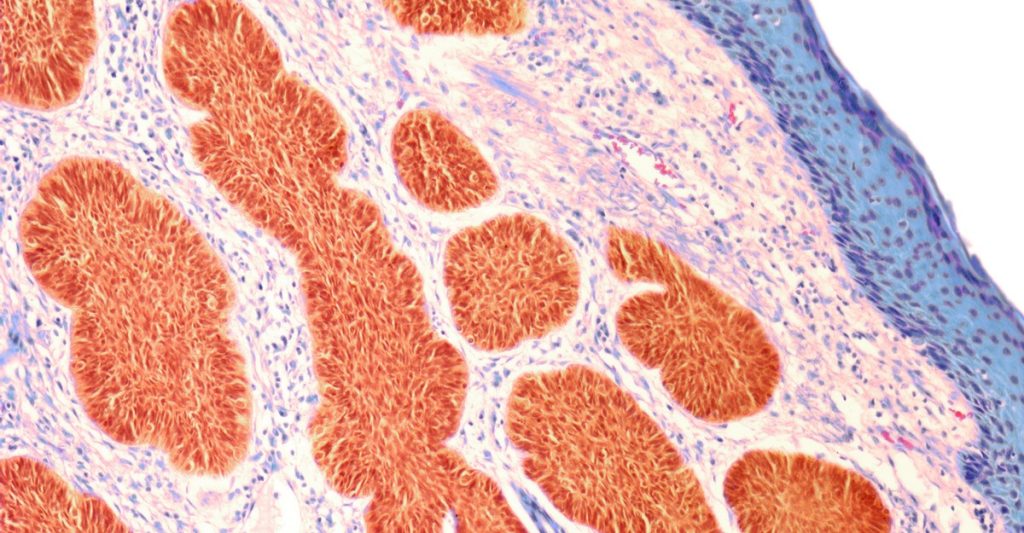

AI reveals differences in appearance of cancer tissue between racial populations

Artificial Intelligence technologies are being used to understand potential differences in prostate cancer tissues between racial populations; cancer tissues manifest differently in black and white patients. This research is revealing the racial bias in AI systems used to diagnose prostate cancer. Algorithmic models are trained on data from majority white populations, which means that prostate […]

Limiting racial disparities and bias for wearable devices in health science research

Consumer wearables are devices used for tracking activity, sleep, and other health-related outcomes (e.g. Apple Watch, Fitbit, Samsung, Basis, Mio, PulseOn, Who… Consumer wearables are intended to help people reach their wellness goals. However, these wearables are less accurate for people with darker skin tones, […]

Health Monitoring Devices

Health Monitoring Devices have gained popularity over the past few years, and hold promise in helping people to reach their wellness goals. However, these devices rely on un-representative data-driven algorithms, which leaves ethnic minorities vulnerable to their ineffectiveness.

Studies find bias in AI models that recommend treatments and diagnose diseases

New research shows that AI models designed for health care settings can exhibit bias against certain ethnic and gender groups. Machine learning models for healthcare hold promise in improving medical treatments by improving predictions of care and mortality; however, their black-box nature and bias in training data sets leave them vulnerable to instead hinder […]

Dissecting racial bias in an algorithm used to manage the health of populations

Research article examining racial bias in health algorithms. The research shows that a widely used algorithm, which affects millions of patients, exhibits a significant racial bias. There is evidence that Black patients, who are assigned the same level of risk as white patients by the algorithm, are actually much sicker. This racial discrimination is reducing […]

New Study Blames Algorithm For Racial Discrimination

This article examines a tool created by Optum, which was designed to identify high-risk patients with untreated chronic diseases, in order to redistribute medical resources to those who need them most. Research has shown this algorithm to be biased; it was less likely to admit black people than white people who were equally sick to […]

Artificial Intelligence in Healthcare: The Need for Ethics

The advent of AI promises to revolutionise the way we think about medicine and healthcare, but who do we hold accountable when automated procedures go awry? In this talk, Varoon focuses on the lack of affordable medicines within healthcare and the concerns over racial bias being brought into the healthcare system.

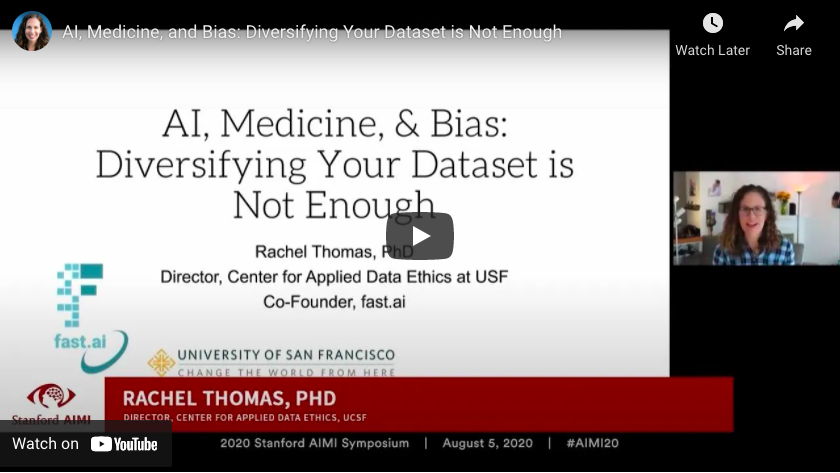

AI, Medicine, and Bias: Diversifying Your Dataset is Not Enough

Using machine learning in medicine as an example, Rachel Thomas examines racial bias within the AI technologies driving modern-day medicines and treatments. She argues that whilst the diversity of your data set and the performance of your model across different demographic groups are important, this is only a narrow slice […]

Bias + Artificial Intelligence (in Medicine)

Talk by Rachel Thomas on the prevalence of bias within AI-based technology used in medicine. AI has the potential to remove human biases in the healthcare system, however its integration within medicine could also amplify the existing biases.

Racial Bias in Science and Medicine: Who’s Included?

A short video examining the lack of inclusion within clinical biomedical research, and the consequence this has on the effectiveness of the treatments and medicines for non-white patients. Lack of research on minority patients means that we do not understand the racial differences in drug response, and so approved medical treatments are excluding a huge […]

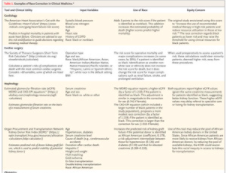

The Need for Use of Race Correction in Clinical Algorithms

From Medicine and Society in The New England Journal of Medicine: “Hidden in Plain Sight: Reconsidering the Use of Race Correction in Clinical Algorithms”. Physicians still lack consensus on the meaning of race within medical science; there is an ongoing debate as to whether racial and ethnic categories can reflect underlying population genetics, and […]

‘Objective’ Science and White Bias: BAME Under-Representation in Biomedical Research (Part 2)

By Amber Roguski. This is the second post in a two-part blog series. It explores the under-representation of Minority Ethnic individuals as participants in biomedical research. This article explores racial bias and exclusion within biomedical research. White People are 87% more likely to be included in medical research than people from a Minority Ethnic Background, […]

Addressing Bias: Artificial Intelligence in Cardiovascular Medicine

As the leading cause of morbidity and mortality worldwide, cardiovascular disease is prevalent across all populations. Artificial intelligence (AI) is providing opportunities to transform cardiovascular medicine. Medical report which examines the potential of Artificial Intelligence in cardiovascular medicine; it could hugely benefit patient diagnosis and treatment of what is the leading cause of morbidity and […]

Understanding AI bias in banking

As banks invest in AI solutions, they must also explore how AI bias impacts customers and understand the right and wrong ways to approach it. AI systems could unfairly decline new bank account applications, block payments and credit cards, deny loans, and other vital financial services and products to qualified customers because of how their […]

Intelligence Unleashed – An argument for education

This research paper gives arguments for how AI can benefit our education system. It argues that AI can support teachers in giving children the best education whilst not taking away from the humanity of it. AI can be beneficial in aspects such as online tutoring, collaborative learning, and tackling achievement gaps. While it does not […]

Biases are being baked into artificial intelligence

When it comes to decision making, it might seem that computers are less biased than humans. But algorithms can be just as biased as the people who create the… Quick, concise Axios video that describes algorithmic bias, how and why human bias ends up in systems used for hiring and criminal justice among other things.

Racial Justice: Decode the Default (2020 Internet Health Report)

Technology has never been colourblind. It’s time to abolish notions of “universal” users of software. This is an overview on racial justice in tech and in AI that considers how systemic change must happen for technology to support equity.

Trial topic

AI tools are used to spot potentially harmful comments, posts and content and remove them from discussion boards and social media platforms. These tools may often misconstrue language that is culturally different, effectively censoring people’s voices.

Building The Race and AI Toolkit

If you are reading this, you have been asked to help contribute to, or test, this Race and AI Toolkit. Thank you for taking up the mission! Key facts: We invite AI, ethics and civil society experts to help with the following: Suggest a Resource to add to the Toolkit. Do you know any articles, […]

Racist Robots? How AI bias may put financial firms at risk

Artificial intelligence (AI) is making rapid inroads into many aspects of our financial lives. Algorithms are being deployed to identify fraud, make trading decisions, recommend banking products, and evaluate loan applications. This is helping to reduce the costs of financial products and improve th… Through a case study of mortgage applications, this article shows how […]

AI risks replicating tech’s ethnic minority bias across business

Diverse workforce essential to combat new danger of ‘bias in, bias out’. This short article looks at the link between the lack of diversity in the AI workforce and the bias against ethnic minorities within financial services – the “new danger of ‘bias in, bias out’”.

Black Loans Matter: fighting bias for AI fairness in lending

Today in the United States, African Americans continue to suffer from financial exclusion and predatory lending practices. Meanwhile the advent of machine learning in financial services offers both promise and peril as we strive to insulate artificial intelligence from our own biases baked into the historical data we need to train our algorithms. A detailed […]

Measuring racial discrimination in algorithms

There is growing concern that the rise of algorithmic decision-making can lead to discrimination against legally protected groups, but measuring such algorithmic discrimination is often hampered by a fundamental selection challenge. We develop new quasi-experimental tools to overcome this challenge and measure algorithmic discrimination in the setting of pre-trial bail […]

Unmasking Facial Recognition | WebRoots Democracy Festival

This video is an in depth panel discussion of the issues uncovered in the ‘Unmasking Facial Recognition’ report from WebRootsDemocracy. This report found that facial recognition technology use is likely to exacerbate racist outcomes in policing and revealed that London’s Metropolitan Police failed to carry out an Equality Impact Assessment before trialling the technology at […]

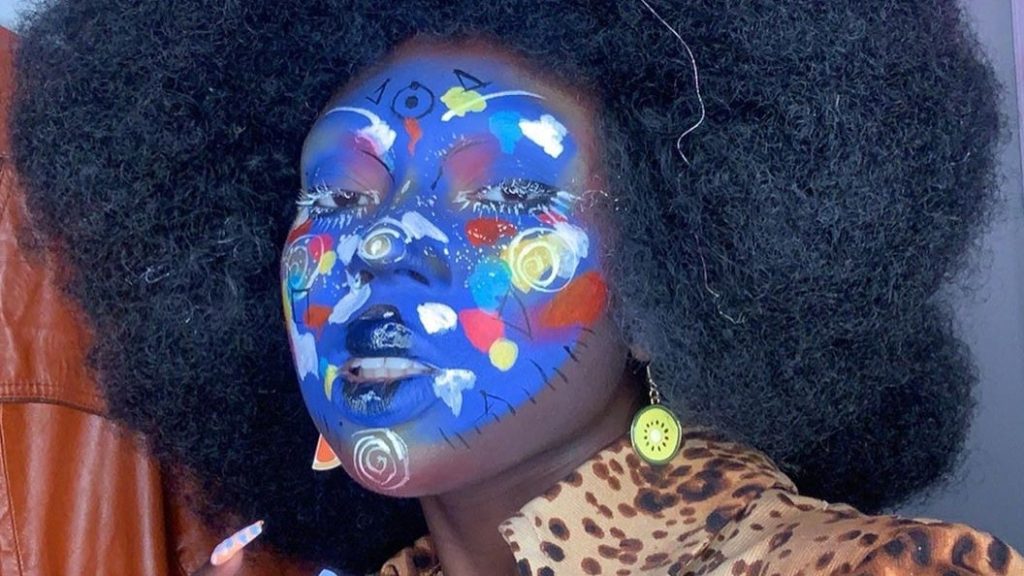

Can make-up be an anti-surveillance tool?

As protests against police brutality and in support of the Black Lives Matter movement continue in the wake of George Floyd’s killing, protection against mass surveillance has become top of mind. This article explains how make-up can be used both as a way to evade facial recognition systems and as an art form.

Machine Bias – There’s software used across the country to predict future criminals and it’s biased against blacks

There’s software used across the country to predict future criminals. And it’s biased against blacks. This article details software used to predict the likelihood of recurring criminality. It uses case studies to demonstrate the racial bias prevalent in the software used to predict the ‘risk’ of further crimes. Even for […]

Another arrest, and jail time, due to a bad facial recognition match

A New Jersey man was accused of shoplifting and trying to hit an officer with a car. He is the third known black man to be wrongfully arrested based on face recognition.

Algorithms and bias: What lenders need to know

Much of the software now revolutionising the financial services industry depends on algorithms that apply artificial intelligence (AI) – and increasingly, machine learning – to automate everything from simple, rote tasks to activities requiring sophisticated judgment. Explains (from a US perspective) how the development of machine learning and algorithms has left financial services at risk […]

AI Perpetuating Human Bias in the Lending Space

AI was supposed to be the pinnacle of technological achievement — a chance to sit back and let the robots do the work. While it’s true AI completes complex tasks and calculations faster and more accurately than any human could, it’s shaping up to need some supervision. There is data which predicts that the introduction […]

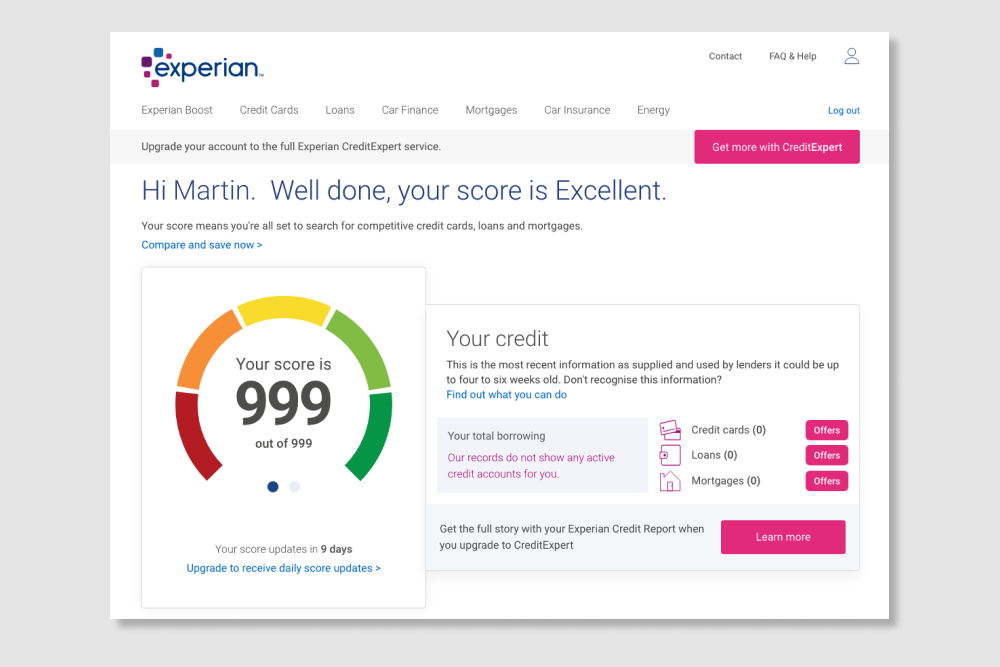

Reducing bias in AI-based financial services

The impact of artificial intelligence in consumer lending. This US focused report considers four distinct ways of incorporating Artificial Intelligence into credit lending. It highlights the existing racial bias in credit scores, where white / non-Hispanic individuals are likely to have a much higher credit score than black / African American individuals, and argues that […]

Climate Change and Social Inequality

UN Working Paper providing an evidence base for a conceptual framework of the cyclical relationship between climate change and social inequality.

Credit Scoring and Interest Rates

Minority groups are often treated as high risk (more likely to not pay money back) because of the use of proxy data in financial institutions. This means that people from minority ethnic groups can find it hard to get credit, and get offered higher interest rates, widening poverty gaps.

Access to Bank Accounts, Loans and Mortgages

Algorithms can be used to decide when to withhold loans, mortgages and even bank accounts, on the basis of who is likely to make money for the bank. Minority ethnic groups can be disproportionately disadvantaged within these prediction systems by being determined as not meeting the criteria for lending, even when others with the same financial status get approved.

Evaluating neural toxic degeneration in language models

Real toxicity prompts. Pre-trained neural language models (LMs) are prone to generating racist, sexist, or otherwise toxic language which hinders their safe deployment. We investigate the extent to which pre-trained LMs can be prompted to generate toxic language, and the effectiveness of controllable text generation algorithms at preventing such toxic degeneration. This paper highlights how […]

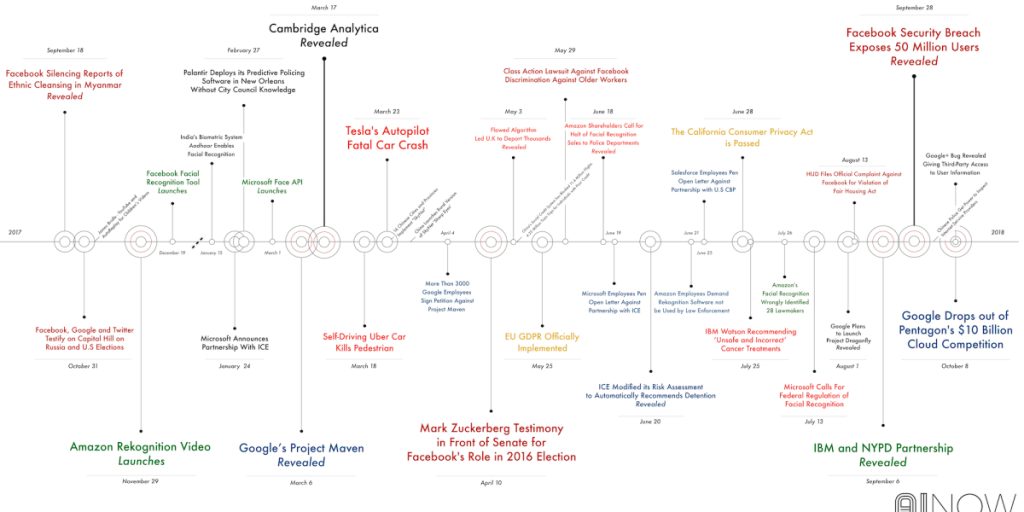

Google hired Timnit Gebru to be an outspoken critic of unethical AI. Then she was fired for it.

Timnit Gebru and Google: Timnit Gebru is one of the most high-profile Black women in her field and a powerful voice in the new field of ethical AI, which seeks to identify issues around bias, fairness, and responsibility. Google hired her, then fired her. This article argues that leading AI ethics researchers, such as Timnit […]

Face-scanning algorithm increasingly decides whether you deserve the job

HireVue claims it uses artificial intelligence to decide who’s best for a job. Outside experts call it “profoundly disturbing.” An article about HireVue’s “AI-driven assessments”. More than 100 employers now use the system, including Hilton and Unilever, and more than a million job seekers have been analysed.

Gender Shades: Intersectional Accuracy Disparities in Commercial Gender Classification

MIT Media Lab. Recent studies demonstrate that machine learning algorithms can discriminate based on classes like race and gender. In this work, we present an approach to e… According to this paper by researchers from MIT and Stanford University, three commercially released facial-analysis programs from major […]

Amazon scraps AI recruiting tool showing bias against women

In 2018, Amazon’s use of AI for hiring was discovered to favour male job candidates, because its algorithms had been trained on 10 years’ worth of internal data that heavily skewed male. The algorithm was trained, in effect, to believe that male candidates were better than female candidates.

This tech startup uses AI to eliminate all hiring biases

This video argues that hiring is largely analogue and broken. This leads to major problems such as inefficiency, ineffectiveness (50% of first-year hires fail), poor candidate experience, and lack of diversity. The hiring process is plagued by gender bias, age bias, socioeconomic bias, and racial bias. Pymetrics intentionally audits algorithms to weed out unconscious human […]

How to use AI hiring tools to reduce bias in recruiting

From machine learning tools that optimize job descriptions, to AI-powered psychological assessments of traits like fairness, here’s a look at the strengths – and pitfalls – of AI. Commercial AI recruitment systems cannot always be trusted to be effective in doing what the vendors say they do. The technical capabilities this type of software offers […]

Using AI to eliminate bias from hiring

AI could eliminate unconscious bias and sort through candidates in a fair way. Many current AI tools for recruiting have flaws, but they can be addressed. The beauty of AI is that we can design it to meet certain beneficial specifications. A movement among AI practitioners like OpenAI and the Future of Life Institute is […]

Rights group files federal complaint against AI-hiring firm HireVue, citing ‘unfair and deceptive’ practices

AI is now being used to shortlist job applicants in the UK – let’s hope it’s not racist. AI-based video interviewing software such as that made by HireVue is being used by companies for the first time in job interviews in the UK to shortlist the best job applicants. HireVue’s “AI-driven assessments,” which more than […]

When your resume is (not) turning you down: Modelling ethnic bias in resume screening

Resume screening is the first hurdle applicants typically face when they apply for a job. Despite the many empirical studies showing bias at the resume‐screening stage, fairness at this funnelling st… CVs are one of the most frequently used screening tools worldwide. CV screening is also the first hurdle applicants typically face when they apply […]

In the Covid-19 jobs market, biased AI is in charge of all the hiring

As millions of people flood the jobs market, companies are turning to “biased and racist” AI to sift through the avalanche of CVs. This can lock certain groups out of employment and […]

Read More

These robots handle dull and dangerous work humans do today — and can create new jobs

Avidbots, iUNU and 6 River Systems are among the start-ups on CNBC’s Upstart 100 list that are putting robots to work doing “dull, dirty and dangerous” tasks previously handled by humans. This article highlights the benefits of using AI and robots in certain work situations.

Read More

PwC facial recognition tool criticised for home working privacy invasion

Accounting giant PwC has come under fire for the development of a facial recognition tool that logs when employees are absent from their computer screens while they work from home. The technology, which is being developed specifically for financial institutions, recognises the faces of workers via t… PwC has come under fire for the development […]

Read More

Workforce View 2020

High-definition research into employee attitudes. A comprehensive report on worker sentiment about the general outlook for the workplace and the long-term prospects for the jobs they hold. It reports high levels of anxiety among workers due to automation.

Read More

AI will impact future of jobs

Will AI eliminate more jobs than it creates? Experts weigh in on a hot topic that impacts almost every industry. The impact of AI on future jobs.

Read More

An AI expert told ’60 Minutes’ that AI could replace 40% of jobs

Artificial intelligence can replace repetitive tasks, but it doesn’t have the empathy to lead. Provides an anecdotal view from an AI expert on which jobs are already being displaced by AI and automation.

Read More

The Future of Jobs Report 2020

After years of growing income inequality, concerns about technology-driven displacement of jobs, and rising societal discord globally, the combined health and economic shocks of 2020 have put economies into freefall, disrupted labour markets and fully revealed the inadequacies of our social contract… Comprehensive report on the future of work, increase of automation/digitalisation of tasks and […]

Read More

Lack of Diversity in AI Workforce

There is a very low representation of minority ethnic groups in the population of people employed in AI. This is a huge cause for concern since without a diverse set of AI creators, our AI models are bound to be prone to blind spots that bias against and ultimately harm minority ethnic groups.

Read More

Face-Scanning

Computer vision technologies have a harder time recognising people with darker skin or from Asian backgrounds. One main reason for this is that the datasets used by companies to train facial analysis software use mainly white examples and are not truly representative of diversity in society.

Read More

CV Screening

AI for recruiting is the application of artificial intelligence (such as machine learning, natural language processing, and sentiment analysis) to the recruitment function. It has the potential to mitigate bias, but is also likely to amplify it.

Read More

Job Creation

AI has the potential to unlock new jobs – by allowing activities that are dangerous for humans or not even possible today, for example deep sea diving or space exploration.

Read More

Job Enhancement

AI-equipped robots will be able to perform dangerous or repetitive physical tasks, thus creating safer workplaces (e.g., wearable robots that support human strength may prevent musculoskeletal disorders).

Read More

Job Replacement

AI-assisted automation of tasks will eliminate jobs in an uneven way across ethnic groups, further marginalising groups that are already at risk of lower pay and career advancement.

Read More

The Misinformation Edition of the Glass Room

The Misinformation Edition of the Glass Room is an online version of a physical exhibition that explores different types of misinformation, teaches people how to recognise it and combat its spread.

Read More

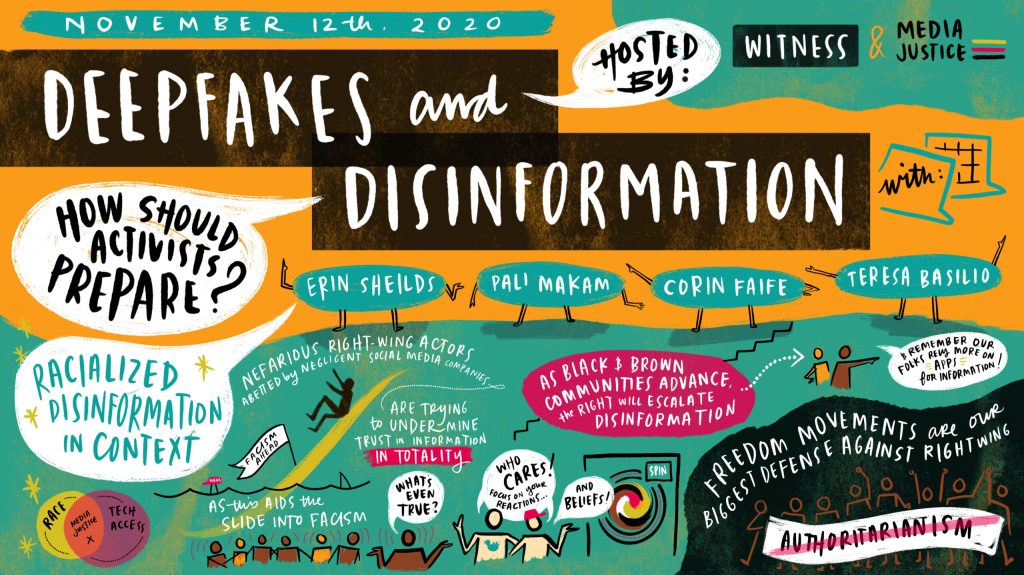

Deepfakes and Disinformation

Earlier this month, in the aftermath of a decisive yet contested election, MediaJustice, in partnership with MediaJustice Network member WITNESS, brought together nearly 30 civil society groups, researchers, journalists, and organizers to discuss the impacts visual disinformation has had on institutions and information systems. Deepfakes and disinformation can be used in racialised disinformation […]

Read More

Software that monitors students during tests perpetuates inequality and violates their privacy

In this opinion piece, a university librarian claims that millions of algorithmically proctored (invigilated) tests are happening every month around the world, with numbers increasing exponentially during the pandemic. In his experience, algorithmic ‘proctoring’ reinforces white supremacy, sexism, ableism, and transphobia, invades students’ privacy and is often a civil rights violation.

Read More

Remote testing monitored by AI is failing the students forced to undergo it

An opinion piece giving examples of students who have been highly disadvantaged by exam software, including a Muslim woman forced by the software to remove her hijab in order to prove she was not hiding anything behind it.

Read More

Exams that use facial recognition may be ‘fair’ – but they’re also intrusive

News article which argues that whilst AI facial recognition during exams might be fair, it is both an invasion of privacy and is at risk of bringing unwarranted biases.

Read More

Beyond gadgets: EdTech to help close the attainment gap

A video overview of a report advocating for the use of edtech, or education technology, which includes many AI solutions, in order to close the “Opportunity Gap” between marginalised and “mainstream” pupils.

Read More

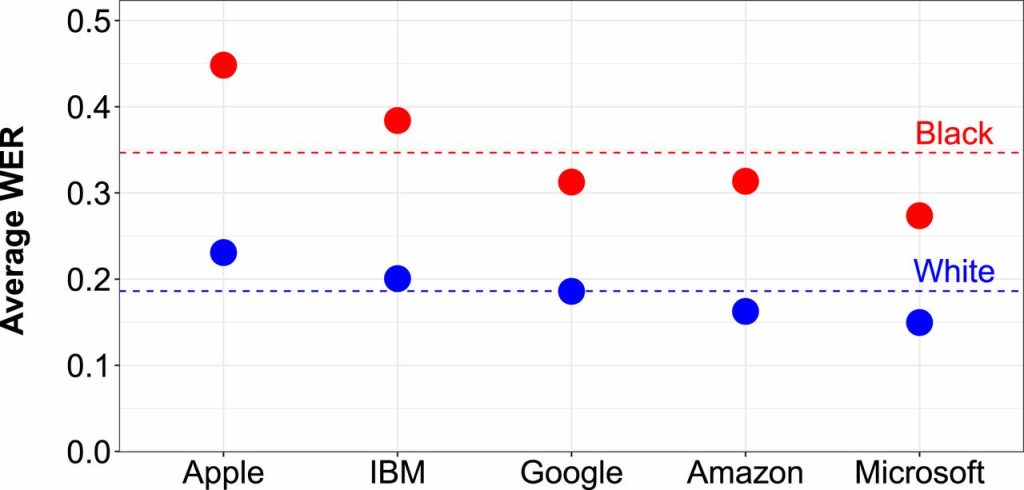

Speech recognition in education: The powers and perils

Weighing up the huge potential of voice recognition technology to gain insights into children’s language and reading development, against a difference of 16% in misidentified words between white and black voices.

Read More

AI teachers may be the solution to our education crisis

This article looks at the global shortage of teachers and how AI might be used to supplement teaching and provide education where it is lacking. It argues that AI could be less biased than teachers, thereby resolving inequity.

Read More

Can computers ever replace the classroom?

This article considers the various ways AI can be used during the pandemic to boost virtual learning, focusing on Chinese company Squirrel AI who are reporting good results with computer tutors and personalised learning, and weighing up the risks, such as surveillance of Muslim Uighurs in Xinjiang.

Read More

In Hong Kong, this AI Reads children’s emotions as they learn…

Facial recognition AI, combined with other AI assessment, is used to spot how children are performing and boost their performance. However, there is concern that it may not work so well for students with non-Chinese ethnicities who were not part of the training data.

Read More

AI is coming to schools, and if we are not careful, so will its biases

This article looks at what issues may arise for children from minority and underprivileged communities from replacing teachers with AI.

Read More

Flawed Algorithms are Grading Millions of Students’ Essays

Automated essay grading in the US has been shown to mark down African American students and those from other countries.

Read More

Solving The Problem Of Algorithm Bias In AI-Based Learning

This white paper takes a deeper dive into the data and algorithm that underestimated the pass rate of students of certain nationalities, looking at how data and modelling can lead to bias.

Read More

Student Predictions & Protections: Algorithm Bias in AI-Based Learning

This short article gives an example of how predictive algorithms can penalise underrepresented groups of people. In this example, students from Guam had their pass rate underestimated versus other nationalities, because of the low number of students in the data set used to build the prediction model, resulting in insufficient accuracy.

Read More

Inbuilt biases and the problem of algorithms

This article details the algorithm used to inform A Level results for students who could not take exams due to the 2020 pandemic. The algorithm took into account the postcode of the student, which meant that students from lower income areas were more likely to have their grade reduced whilst students in high-income areas were […]

Read More

Algorithms can drive inequality. Just look at Britain’s school exam chaos

An outcry over alleged algorithmic bias against pupils from more disadvantaged backgrounds has now left teenagers and experts alike calling for greater scrutiny of the technology.

Read More

Postcode or performance: How the A Level results of 2020 exposed a broken system

Case study explaining algorithm bias inherent in grade prediction for A Level students. Demonstrates the physical impact AI can have, if not scrutinised for bias.

Read More

The problem with algorithms: magnifying misbehaviour

This news article gives an example of bias present in an algorithm governing the first round of admissions into a medical university. The data used to define the algorithm’s output showed bias against both females and people with non-European-looking names.

Read More

How will artificial intelligence change admissions?

An article detailing how AI might change admissions in terms of the process, the consequences and how students from some countries could be at risk of bias.

Read More

Mary Madden on Algorithmic Bias in College Admissions

A good introductory video on the use of AI in college admissions. It questions at what point it is acceptable to completely remove human oversight from admissions.

Read More

AI in immigration can lead to ‘serious human right breaches’

This video refers to a report from the University of Toronto’s Citizen Lab that raises concerns that the handling of private data by AI for immigration purposes could breach human rights. As AI tools are trained using datasets, before implementing those tools that target marginalized populations, we need to answer questions such as: Where does […]

Read More

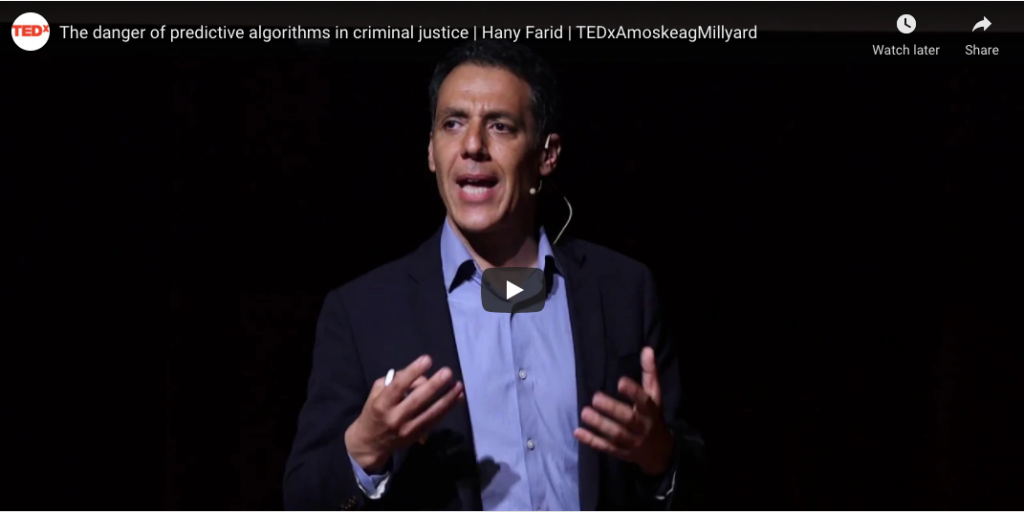

The danger of predictive algorithms in criminal justice

Dartmouth professor Dr. Hany Farid reverse engineers the inherent dangers and potential biases of recommendation engines built to mete out justice in today’s criminal justice system. In this video, he provides an example of how the number of crimes is used as a proxy for race.

Read More

How AI Could Reinforce Biases In The Criminal Justice System

Whilst some believe AI will increase police and sentencing objectivity, others fear it will exacerbate bias. For example, the over-policing of minority communities in the past has generated a disproportionate number of crimes in some areas, which are passed to algorithms, which in turn reinforce over-policing.

Read More

Coded Bias: When the Bots are Racist – new documentary film

This film cuts across all areas of potential racial bias in AI in an engaging documentary film format.

Read More

Using AI in the examination process

Technology to protect remote exam taking against fraud has quickly become necessary as exams move online. Combinations of machine learning algorithms, facial recognition technology and eye tracking software are used to make sure that the person taking the exam is who they say they are and identify cheating.

Read More

How AI applications are used to aid learning

Many exciting AI applications are being used to enhance learning in the classroom, from automated ‘smart tutors’ that can assess pupil performance and tailor learning interventions more accurately than humans, to facial recognition cameras that can assess pupils’ understanding by analysing their facial expressions.

Read More

Using AI systems in school admissions

A range of different ways to make decisions about admissions to universities and schools are being used, including algorithms which use data pulled from students’ social media channels. There is concern that the data used will be biased against ethnic minorities due to the smaller amounts of data available and human bias used in creating the models.

Read More

Using algorithms to predict and award grades and exam results

In 2020 the use of algorithms to replace exams to determine results hit the headlines when it was shown that they penalised students from state schools and low-income postcodes.

Read More

Visualise Climate Change – interactive website

Our project aims to raise awareness and conceptual understanding of climate change by bringing the future closer. Conceptual interactive website to show precise and personalised impacts of climate change using AI and climate modelling. Bringing together researchers from different fields, the website aims to act as an educational tool that will produce accurate and vivid […]

Read More

Using Simulated Data to Generate Images of Climate Change

A case study from ResearchGate. We present a project that aims to generate images that depict accurate, vivid, and personalized outcomes of climate change using Cycle-Consistent Adversarial Networks (CycleGANs). Case Study: Explores the potential of using images from a simulated 3D environment to improve a domain adaptation task carried out by the MUNIT architecture, aiming […]

Read More

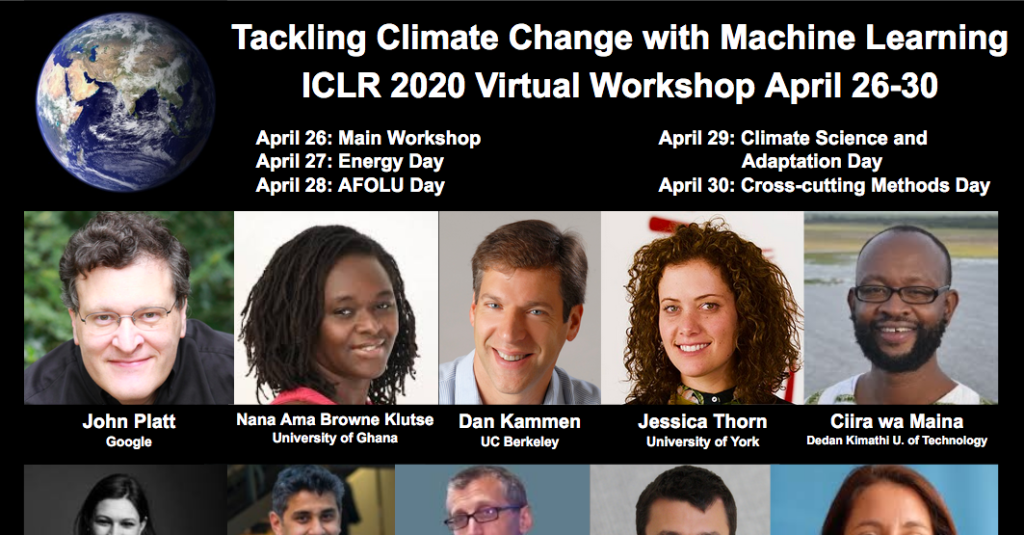

Why the Climate Change AI Community Should Care About Weather: A New Approach for Africa

Case Study: Community perspective of the game-changing socio-economic value that could be achieved with better forecasts, especially among vulnerable communities. The paper presents a new way to view this opportunity by better understanding the problem, with the goal of inspiring the Climate Change AI community to contribute to this important aspect of the climate adaptation […]

Read More

Using Satellite Images and Artificial Intelligence to Improve Agricultural Resilience

Case Study: Most of Rwanda’s crop production comes from smallholder farms. The country’s agriculture officials have historically had insufficient data on where crops are cultivated or how much yield to expect — a hindrance for government’s future planning. Building on previous work with emerging technologies, machine learning, economics, and agriculture, the paper develops a new […]

Read More

How Flood Mapping From Space Protects The Vulnerable And Can Save Lives

Case Study: A pioneering flood mapping and response organization that uses high-cadence, high-resolution satellite imagery to build country-wide flood monitoring and dynamic analytics systems for the most vulnerable around the world.

Read More

AI and Climate Change; The Promise, the Perils and Pillars for Action

The paper provides pillars of action for the AI community, and includes a focus on climate justice where the author recommends that environmental impacts should not be externalised onto the most marginalised populations, and that the gains are not only captured by digitally mature countries in the global north. This will require centring front-line communities […]

Read More

Apprise: Using AI to unmask situations of forced labour and human trafficking

The creators of the Apprise app share how they created a system to assist workers in Thailand to avoid vulnerable situations. Forced labour exploiters continually tweak and refine their own practices of exploitation, in response to changing policies and practices of inspections. The article showcases efforts to create AI tools that predict changing patterns of human exploitation. […]

Read More

AI can be sexist and racist — it’s time to make it fair

Computer scientists must identify sources of bias, de-bias training data and develop artificial-intelligence algorithms that are robust to skews in the data. The article raises the challenge of defining fairness when building databases. For example, should the data be representative of the world as it is, or of a world that many would aspire to? […]

Read More

Establishing an AI code of ethics will be harder than people think

Over the past six years, the New York City police department has compiled a massive database containing the names and personal details of at least 17,500 individuals it believes to be involved in criminal gangs. The effort has already been criticized by civil rights activists who say it is inaccurat… The New York police department has […]

Read More

We tested Europe’s new lie detector for travellers – and immediately triggered a false positive

4.5 million euros have been pumped into the virtual policeman project meant to judge the honesty of travelers. An expert calls the technology “not credible.” IBorderCtrl’s lie detection system was developed in England by researchers at Manchester Metropolitan University. It claims that its virtual cop can detect deception by picking up on the micro gestures the […]

Read More

The danger of predictive algorithms in criminal justice

A study on the discriminatory impact of algorithms in pre-trial bail decisions.

Read More

Racial disparities in automated speech recognition

Racial disparities in automated speech recognition Automated speech recognition (ASR) systems are now used in a variety of applications to convert spoken language to text, from virtual assistants, to closed captioning, to hands-free computing. By analyzing a large corpus of sociolinguistic interviews with white and African American speakers, we demo… Analysis of five state-of-the-art automated […]

Read More

Adjudicating by Algorithm, Regulating by Robot

Sophisticated computational techniques, known as machine-learning algorithms, increasingly underpin advances in business practices, from investment banking to product marketing and self-driving cars. Machine learning—the foundation of artificial intelligence—portends vast changes to the private sect… This article highlights the benefits of artificial intelligence in adjudication and making law in terms of improving accuracy, reducing human biases […]

Read More

Artificial intelligence in the courtroom

The impact of AI on litigation. The current use of AI in reviewing documents, predicting outcome of cases and predicting success rates for lawyers. This article highlights concerns about fallibility and the need of human oversight.

Read More

How AI is impacting the UK’s legal sector

We examine the impact of artificial intelligence on the UK’s legal sector

Read More

Six ways the legal sector is using AI right now

Law Society partner and equity crowdfunding platform Seedrs explains how developments within AI are taking law firms and solicitors to the next level. An article on how AI can be used in adjudication and law in general. It highlights that although AI has vast potential, there is not a broad adoption so far.

Read More

Unmasking Facial Recognition

Facial recognition is not the next generation of CCTV. Whilst CCTV takes pictures, facial recognition takes measurements. Measurements of the distance between your eyes, the length of your nose, the shape of your face. In this sense, facial recognition is the next generation of fingerprinting. It is a highly intrusive form of surveillance which everyone […]

Read More

Facial recognition could stop terrorists before they act

In their zeal and earnest desire to protect individual privacy, policymakers run the risk of stifling innovation. The author makes the case that using facial recognition to prevent terrorism is justified as our world is becoming more dangerous every day; hence, policymakers should err on the side of public safety.

Read More

Is police use of face recognition now illegal in the UK?

The UK Court of Appeal has determined that the use of a face-recognition system by South Wales Police was “unlawful”, which could have ramifications for the widespread use of such technology across the UK. The UK Court of Appeal unanimously decided against a face-recognition system used by South Wales Police.

Read More

UK police adopting facial recognition, predictive policing without public consultation

UK police forces are largely adopting AI technologies, in particular facial recognition and predictive policing, without public consultation. This article calls for transparency and input from the public about how those technologies are being used.

Read More

Data Analytics and Algorithmic Bias in Policing

Algorithms used for predictive policing rely on datasets which are inherently biased because certain communities have historically been over- or under-policed. This results in the amplification of those biases.

Read More

Decision Making in the Age of the Algorithm

A good summary of the differences between predictive analytics – used in AI – and traditional methods, in terms of methods and impact.

Read More

‘Fake news’: Incorrect, but hard to correct. The role of cognitive ability on the impact of false information on social impressions

The present experiment examined how people adjust their judgment after they learn that crucial information on which their initial evaluation was based is incorrect. In line with our expectations, the results showed that people generally do adjust their attitudes, but the degree to which they correct their assessment depends on their cognitive ability. A study […]

Read More

Responsible AI for Inclusive, Democratic Societies: A cross-disciplinary approach to detecting and countering abusive language online

Research paper about responsible AI. Toxic and abusive language threaten the integrity of public dialogue and democracy. In response, governments worldwide have enacted strong laws against abusive language that leads to hatred, violence and criminal offences against a particular group. The responsible (i.e. effective, fair and unbiased) moderation of abusive language carries significant challenges. Our […]

Read More

Let’s Talk About Race: identity, chatbots, and AI

A research paper about race and AI chatbots. Why is it so hard for AI chatbots to talk about race? By researching databases, natural language processing, and machine learning in conjunction with critical, intersectional theories, we investigate the technical and theoretical constructs underpinning the problem space of race and chatbots. This paper questions how to […]

Read More

Racial bias in hate speech and abusive language detection datasets

A paper on racial bias in hate speech. Technologies for abusive language detection are being developed and applied with little consideration of their potential biases. We examine racial bias in five different sets of Twitter data annotated for hate speech and abusive language. Tweets written in African-American English are far more likely to be automatically […]

Read More

Risk of racial bias in hate speech detection

This research paper investigates how insensitivity to differences in dialect can lead to racial bias in automatic hate speech detection models, potentially amplifying harm against minority populations.

Read More

The algorithms that detect hate speech online are biased against black people

A new study shows that leading AI models are 1.5 times more likely to flag tweets written by African Americans as “offensive” compared to other tweets.

Read More

Abolish the #TechToPrisonPipeline

Crime-prediction technology reproduces injustices & causes real harm. The open letter highlights why crime-prediction technologies tend to be inherently racist.

Read More

AI researchers say scientific publishers help perpetuate racist algorithms

The news: An open letter from a growing coalition of AI researchers is calling out scientific publisher Springer Nature for a conference paper it reportedly planned to include in its forthcoming book Transactions on Computational Science & Computational Intelligence. The paper, titled “A Deep Neural… Crime […]

Read More

Google think tank’s report on white supremacy says little about YouTube’s role in people driven to extremism

A Google-funded report examines the relationship between white supremacists and the internet, but it makes scant reference—all of it positive—to YouTube, the company’s platform that many experts blame more than any other for driving people to extremism. YouTube’s algorithm has been found to direct users to extreme content, sucking them into violent ideologies.

Read More

Is Facebook Doing Enough To Stop Racial Bias In AI?

After recently announcing Equity and Inclusion teams to investigate racial bias across their platforms, and undergoing a global advertising boycott over alleged racial discrimination, is Facebook doing enough to tackle racial bias? Disinformation driven via bots that game the AI systems of social media platforms to reinforce racial myths and attitudes as well as the […]

Read More

Discrimination through optimization: How Facebook’s ad delivery can lead to skewed outcomes

The enormous financial success of online advertising platforms is partially due to the precise targeting features they offer. Although researchers and journalists have found many ways that advertisers can target – or exclude – particular groups of users seeing their ads, comparatively little attenti… Ad-delivery is controlled by the advertising platform (eg. Facebook) and researchers […]

Read More

AI biased new media generation

Yeah, great start after sacking human hacks: Microsoft’s AI-powered news portal mixes up photos of women-of-color in article about racism. Blame Reg Bot 9000 for any blunders in this story, by the way. News media is being automated and generated by AI, which can pick up bias from the data sets used for text generation.

Read More

Twitter image cropping

Another reminder that bias, testing, diversity is needed in machine learning: Twitter’s image-crop AI may favour white men, women’s chests. Digital imagery favours white people in framing and de-emphasises the visibility of non-white people. Strange, it didn’t show up during development, says social network.

Read More

AI in Policing

AI tools are developed with the aim of preventing crime, using techniques such as computer vision, pattern recognition, and historical data to create crime maps of locations with a higher risk of offences.

Read More

AI in Immigration

AI is used to help with border control as well as to analyse immigration and visitor applications. Implementations so far have been flagged for encoding unfair treatment of individual visa applications based on the applicant’s country of origin.

Read More

AI in Human Rights

AI tools can be helpful in the fight against human rights issues such as terrorism and human trafficking, but they raise problems for privacy rights.

Read More

AI Making Legal Judgements

Algorithms become the arbiters of determinations about individuals (e.g. government benefits, granting licenses, pre-sentencing and sentencing, granting parole).

Read More

New Medicines and Treatments

Modern medicines and treatments can be improved with the progression of AI in medicine by discovering new drugs, personalising treatments, and speeding up chemical trials. But if the racial exclusion common in biomedical research seeps into the data behind AI, there’s a risk that these medicines won’t be effective for everyone.

Read More

Diagnosis

AI racial bias has extended directly into vital aspects of patient care. With greater rates of misdiagnosis and underdiagnosis of skin cancers in non-white populations, this is a prime example of AI systems adding to the global burden of health disparities which significantly impact minority groups.

Read More

Resource Management

AI can help to improve resource management within the health care system, by locating gaps in the care system, rebalancing resources, and reviewing patient data to identify priority care patients. But how do we ensure that AI systems distribute resources and care fairly? Several case studies show how bias seeping into AI technologies is underserving black and ethnic minority patients.

Read More

Psychological Distance to Climate Impacts

AI methods can be used to reduce the psychological distance to climate impacts in groups who are not actively experiencing the negative effects of climate change in their daily life.

Read More

Distribution of Climate Change Solutions

A benefit of using AI methods in climate change solutions is that the potential for remote collection of data and analysis in the cloud allows for far-reaching geographic applications of solutions.

Read More

Climate Change and Social Inequalities

The relationship between climate change and social inequalities is a vicious cycle. Initial inequality causes disproportionate disadvantage from the effects of climate change to certain groups, resulting in further inequality.

Read More

Health and Social Care

AI has the potential to vastly improve our health care services by improving the efficiency of diagnosis, streamlining the allocation of resources, and identifying new medicines and treatments. But these AI technologies also risk increasing existing health disparities by discriminating against marginalised groups.

Read More

Employment

AI can take away AND create jobs. How can it be used responsibly for greater equality at the workplace – to help, not hinder?

Read More

Digital and Social Media

Explore the influence of AI in digital and social media: content automation and moderation, image editing, facial recognition and news feed visibility are a few topics we touch on.

Read More

Climate Change

How does AI relate to climate change? Does it offer challenges or solutions? We look into these questions from a standpoint of race, geography, social status and mental distance to the issue.

Read More

Content Moderation on News and Social Media

AI tools are used to spot potentially harmful comments, posts and content and remove them from discussion boards and social media platforms. These tools may often misconstrue language that is culturally different, effectively censoring people’s voices.

Read More

Misinformation and News Feeds

Algorithms are used in social media platforms like Facebook to select what to make most visible in people’s news feeds, reinforcing what they already consume based on their profile and interests. This can be gamed to deliberately show misleading information to serve various agendas.

Read More

Google Cloud’s image tagging AI

Google Cloud’s AI recog code ‘biased’ against black people – and more from ML land. Including: Yes, that nightmare smart toilet that photographs you mid… er, process. Digital imagery tagging provides negative context for non-white people.

Read More

Facial recognition

Gender Shades: Intersectional Accuracy Disparities in Commercial Gender Classification. Recent studies demonstrate that machine learning algorithms can discriminate based on classes like race and gender. In this work, we present an approach to evaluate bias present in automated facial… Commercial AI facial recognition systems tend to misclassify darker-skinned females more than any other group (lighter-skinned […]

Read More

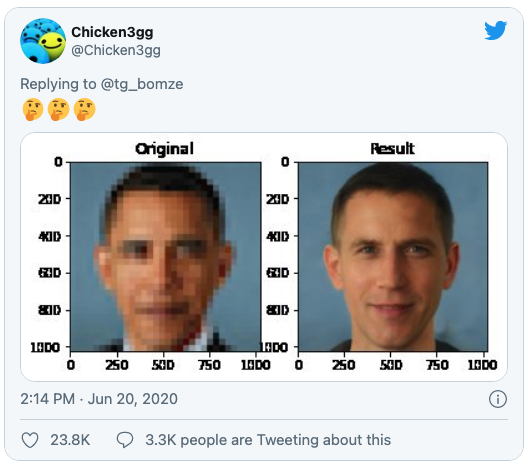

Image processing

Once again, racial biases show up in AI image databases, this time turning Barack Obama white. Researchers used a pre-trained off-the-shelf model from Nvidia. Digital imagery tagging provides negative context for non-white people.

Read More

Automated Content Generation

New AI tools are trained to generate new written content, like articles or image descriptions. This new text reflects the language the AI has been trained on, and can mirror biases in the training data and text sources.

Read More

Image Manipulation and Tagging

When AI is used for changing an image or video file, any built-in bias can alter that image unfavourably: favouring lighter-skinned people and reducing the visibility of darker-skinned people. This gives a false impression of reality through an apparent reality-based medium (seeing is believing).

Read More